During the recent Arctic Cloud Developer Challenge hackathon I played around with AI Builder for the first time. The scenario we built on was detecting good guys and bad guys.

The idea was that the citizens would be able to take pictures of suspicious behavior. Once the picture was taken, the classification of the picture would let them know if it was safe or not.

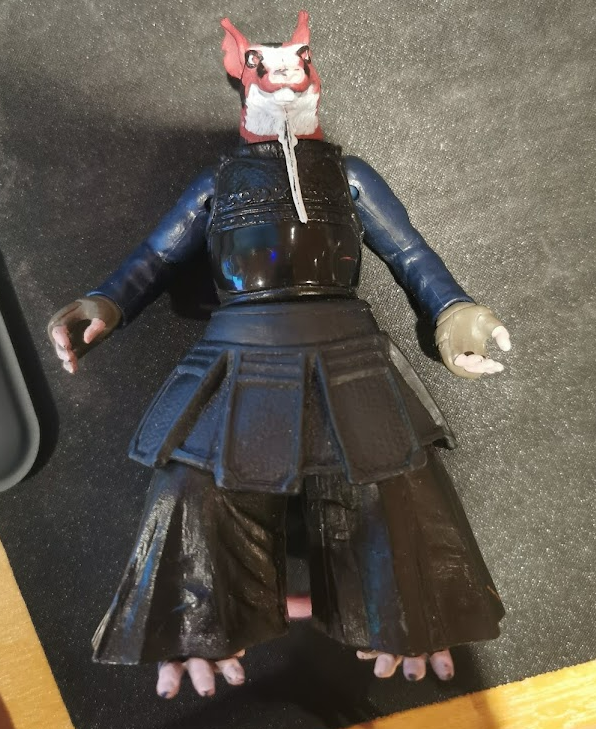

For this exercise I used my nephew’s trusted BadBoy/GoodBoy toys :)

Lobe

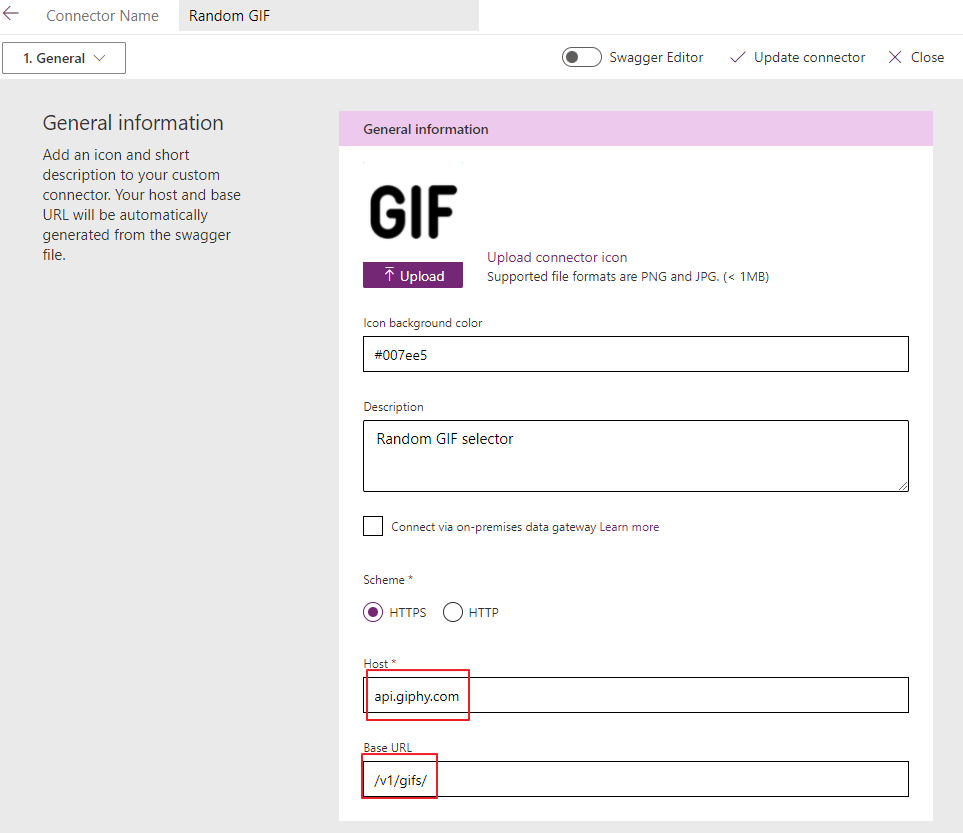

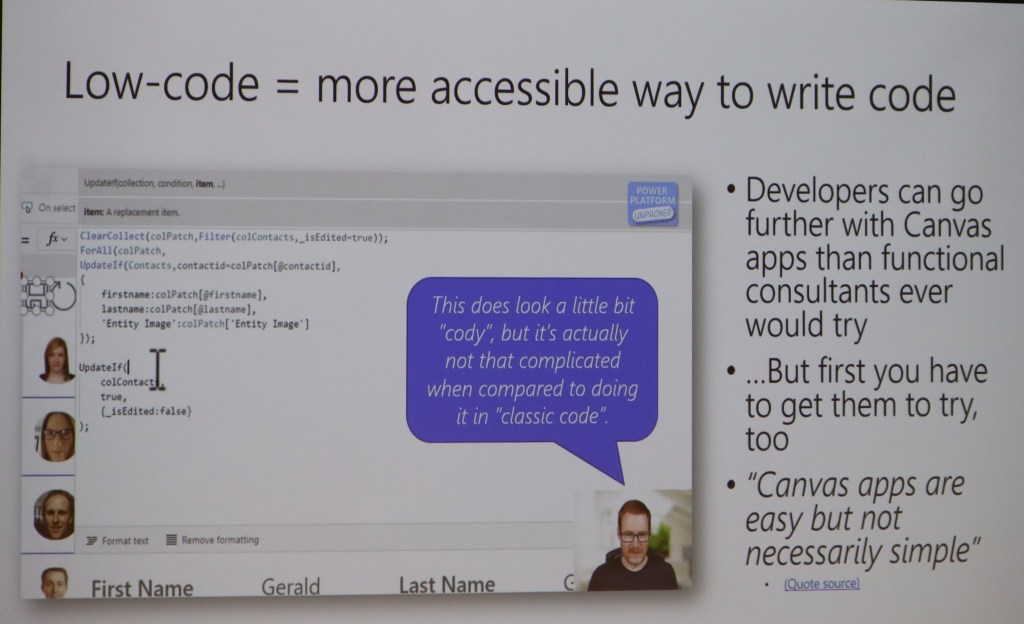

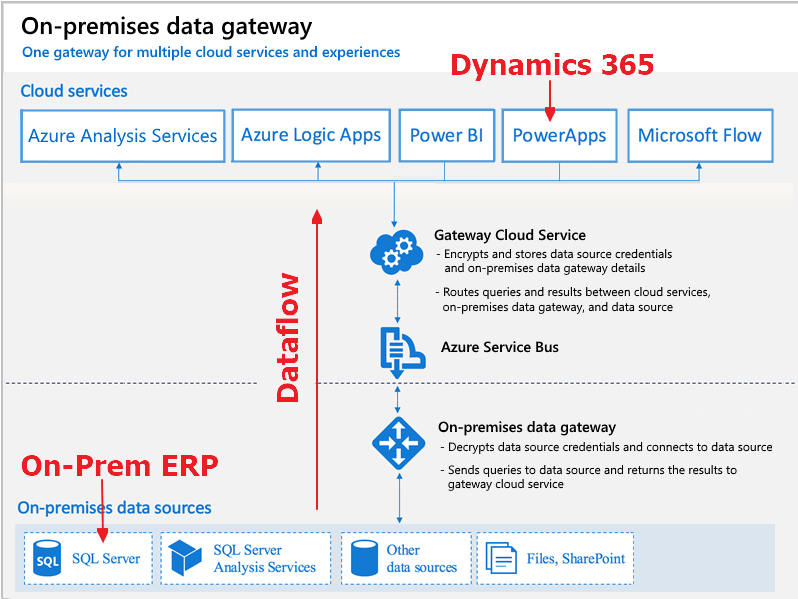

To start off I downloaded a free tool called Lobe (www.lobe.ai). Microsoft acquired this tool recently, and it’s a great way to learn more about object recognition in pictures. The really cool thing about the software is that the calculations for the AI model are done on your local computer, so you don’t need to set up any Azure services to try out a model for recognition.

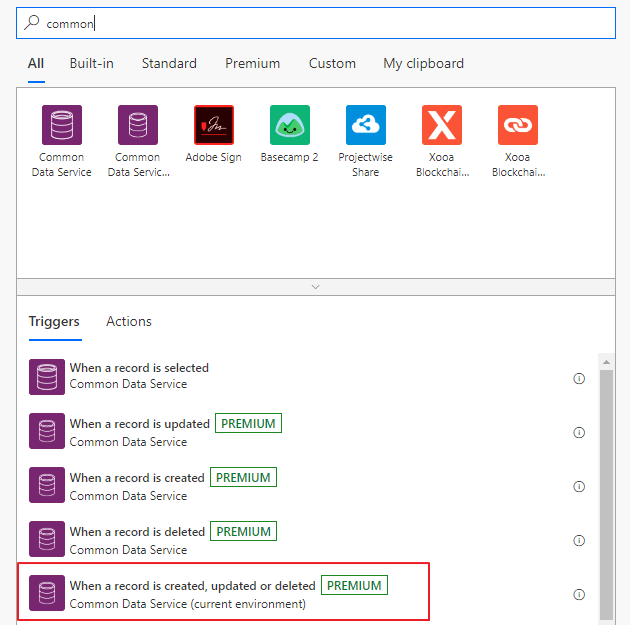

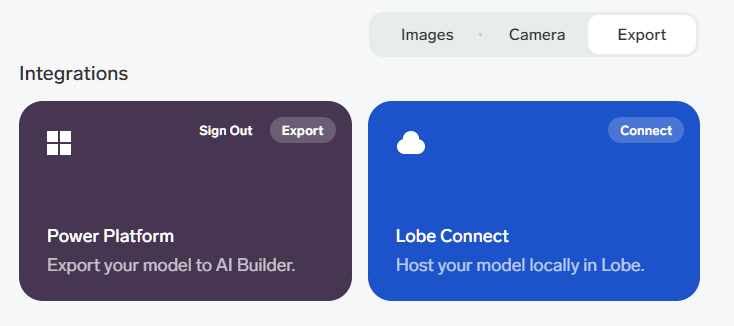

Another great feature is that it integrates seamlessly with Power Platform. Once you have trained your model with the correct data, you just export it to Power Platform!👏

The first thing you need is enough pictures of the object. Take at least 15 pictures from different angles so the model understands the object you want to detect.

Tag all of the images with the correct tags.

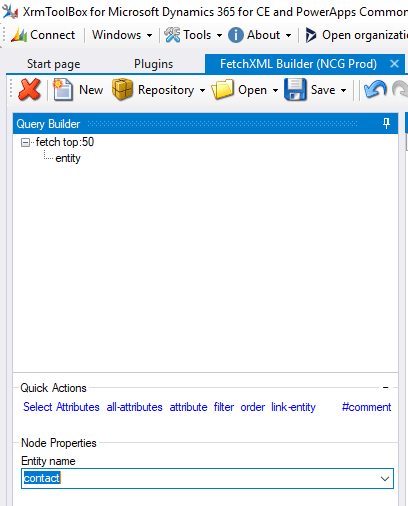

Next step is to train the model. This will be done using your local PC resources. When the training is complete you can export to Power Platform.

It’s actually that simple!!! This was really surprising to me:)

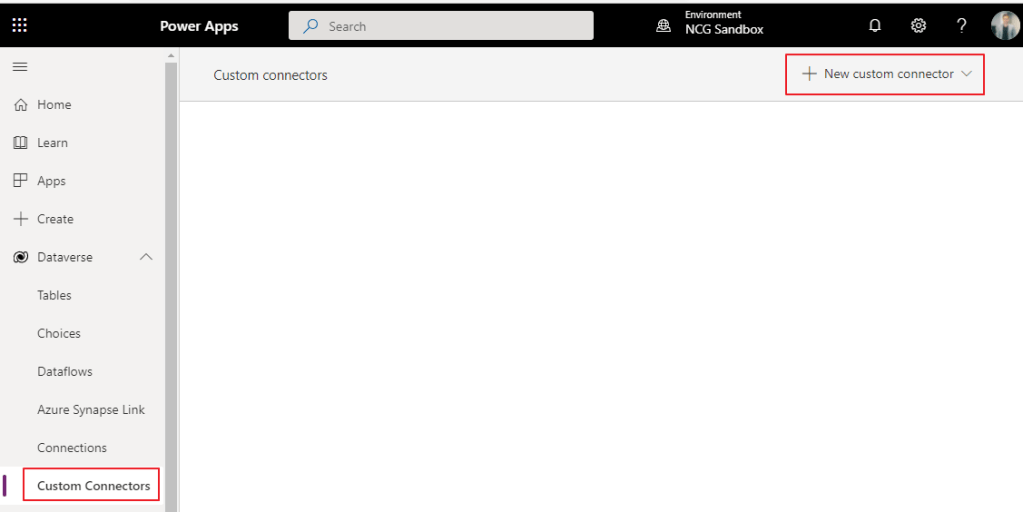

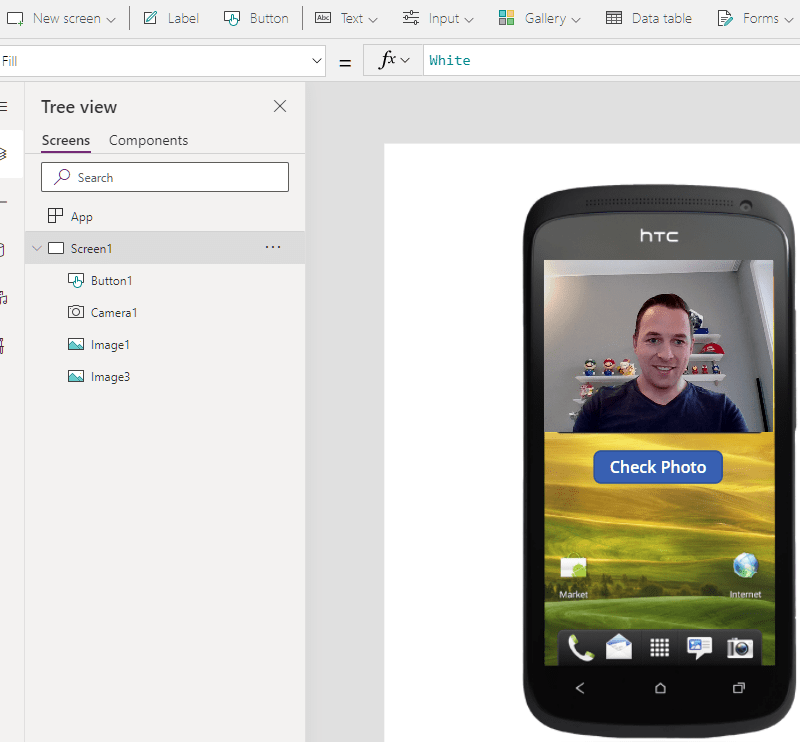

Power App

Next up was the Power App the citizens were going to use for the pictures. The idea, of course, was that everyone had this app on their phones and licensing wasn’t an issue 😂

I just added a camera control, and used a button to call a Power Automate Cloud Flow, but this is also where the tricky parts began.

An image is not just an image!!!!! 😤🤦‍♂️🤦‍♀️

How on earth is anyone supposed to understand that you need to convert the picture you take so that you can send it to Flow, only to convert it there into something else that finally makes sense???!??!

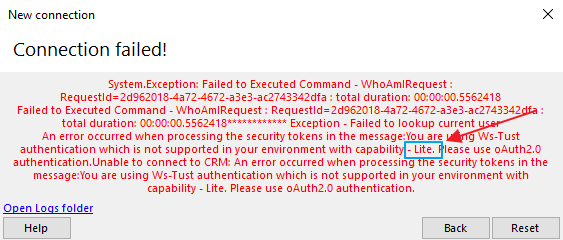

Base64 images and Power Automate – What a shit show

After asking a few friends and googling tons of different tips/tricks, I got the lines below to work. I am sure there are other ways of doing this, but none of them were obvious to me.

Set(WebcamPhoto, Camera1.Photo);

Set(PictureFormat,Substitute(Substitute(JSON(WebcamPhoto,JSONFormat.IncludeBinaryData),"data:image/png;base64,",""),"""",""));
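For anyone wondering what that formula actually does to the picture, here is a rough Python sketch of the same idea. It is only an illustration outside Power Apps, not what the app runs: `extract_base64` and the sample string are made-up names and data. It assumes the JSON call returns a quoted data URI, which is what the two Substitute calls suggest.

```python
import json


def extract_base64(json_encoded_photo: str) -> str:
    """Return the bare Base64 payload from a JSON-encoded data URI."""
    # json.loads removes the surrounding double quotes, which is the job of
    # the outer Substitute(..., """", "") in the Power Apps formula.
    data_uri = json.loads(json_encoded_photo)

    # A data URI looks like "data:image/png;base64,<payload>". Splitting on
    # the first comma drops the prefix (the inner Substitute) without
    # hard-coding the image type.
    header, comma, payload = data_uri.partition(",")
    if not comma or not header.startswith("data:") or not header.endswith(";base64"):
        raise ValueError("expected a Base64 data URI")
    return payload


# Stand-in for what JSON(WebcamPhoto, JSONFormat.IncludeBinaryData) returns;
# the payload here is just the text "hello", not a real photo.
sample = '"data:image/png;base64,aGVsbG8="'
print(extract_base64(sample))
```

The sketch splits on the first comma instead of matching the exact `data:image/png;base64,` text, so it would also cope with a different image type. Whether the camera control ever returns anything other than PNG is not something this post confirms.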

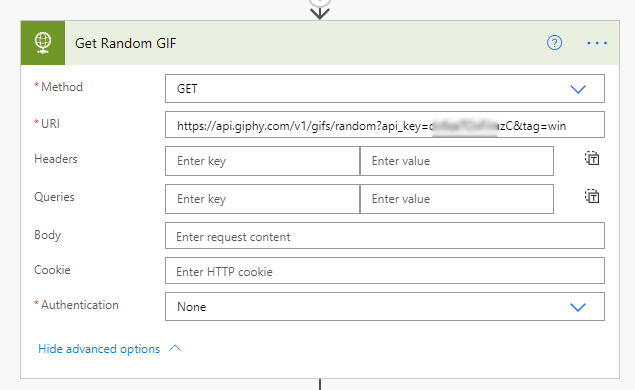

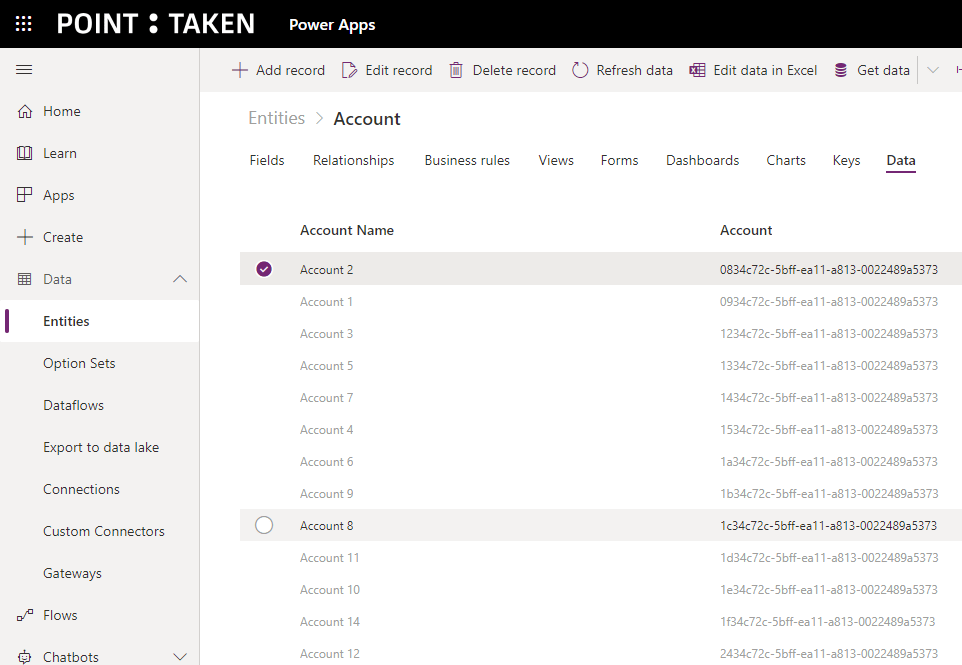

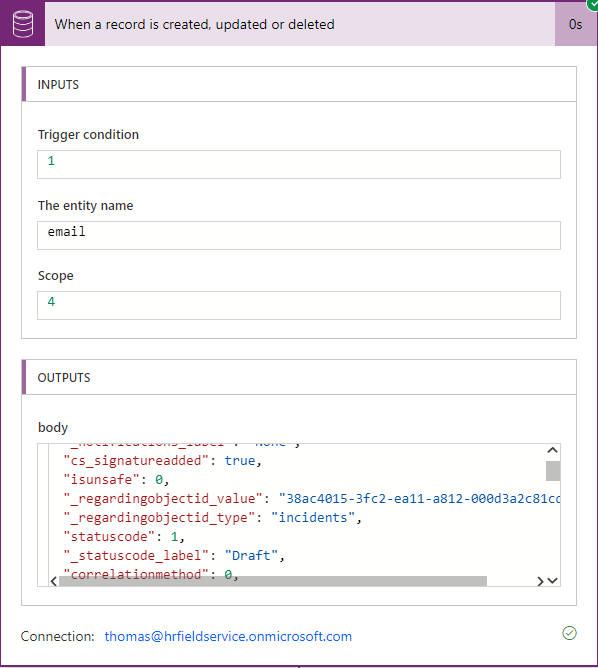

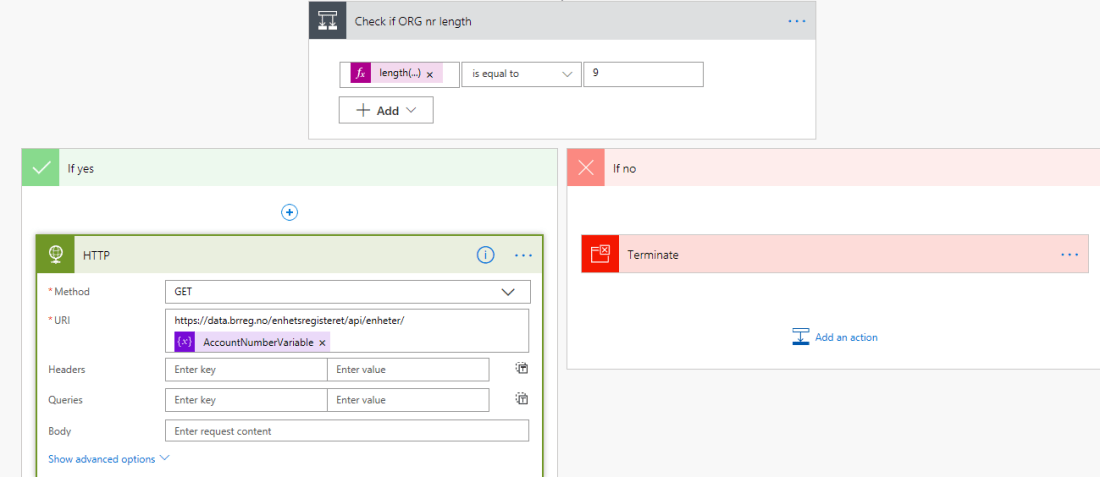

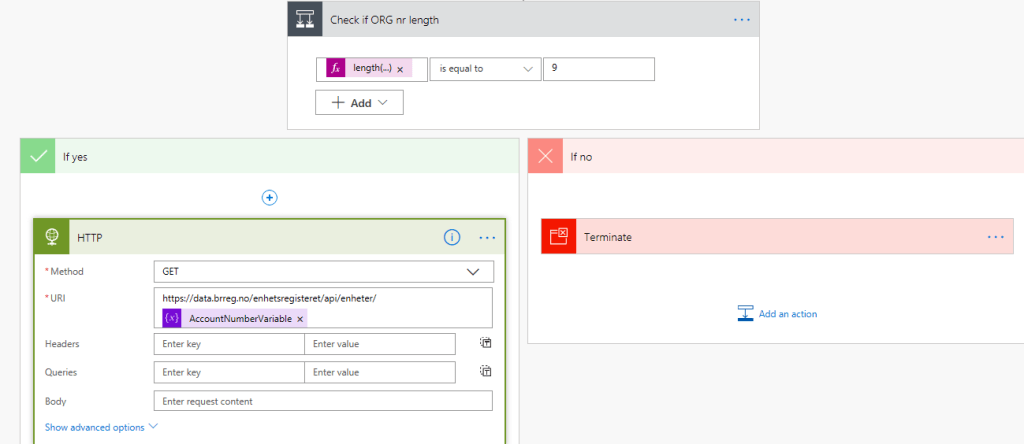

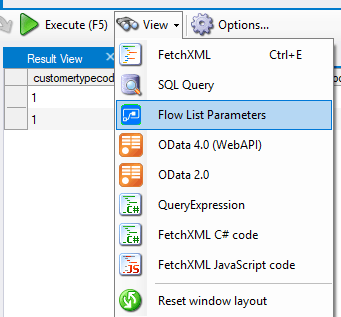

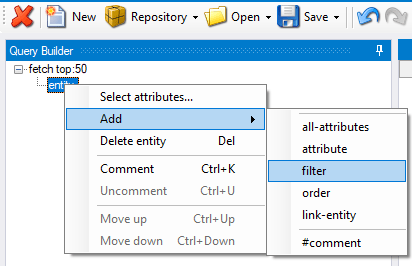

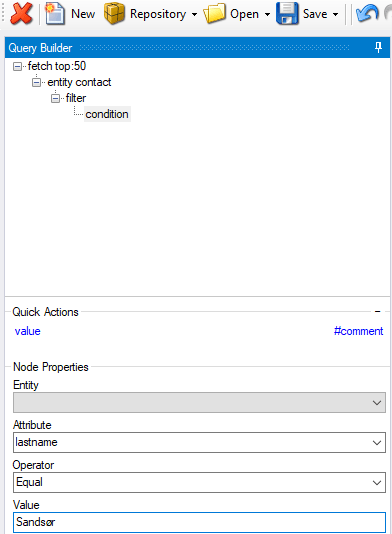

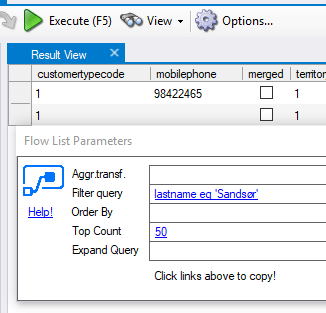

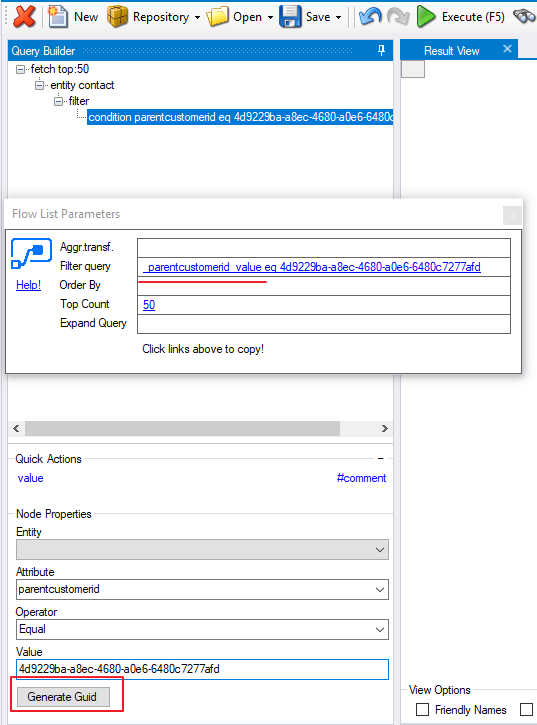

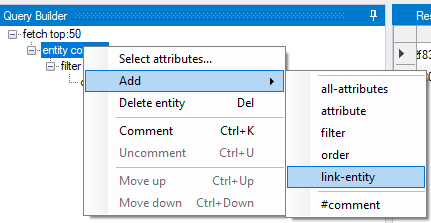

'PowerAppV2->Compose'.Run(PictureFormat);

The receiving Power Automate Cloud Flow looked like this:

I tried receiving the image as an image type, but I couldn’t figure it out. So I converted it to Base64 (I believe) before sending it to Flow. In the Flow I then converted it back to a binary representation of the image before sending it to the prediction.
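The difference between the two forms is easier to see outside of Flow. Below is a minimal Python sketch of the Base64-to-binary step, assuming the flow receives plain Base64 text. The helper name `base64_to_binary` simply mirrors what the Compose step is described as doing, and the "photo" is made-up filler data.

```python
import base64

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"  # the first 8 bytes of every PNG file


def base64_to_binary(payload: str) -> bytes:
    """Decode Base64 text back into the raw bytes it represents."""
    # validate=True rejects characters outside the Base64 alphabet instead
    # of silently skipping them.
    return base64.b64decode(payload, validate=True)


# Made-up stand-in for a photo: a PNG signature plus a few filler bytes.
raw = PNG_SIGNATURE + b"fake image data"
as_text = base64.b64encode(raw).decode("ascii")  # the text form sent to Flow

decoded = base64_to_binary(as_text)  # the byte form a model can read
print(decoded == raw)
print(decoded.startswith(PNG_SIGNATURE))
print(len(as_text), "characters of text vs", len(decoded), "bytes")
```

Base64 is just a text-safe way of writing the same bytes, which is why the picture can travel as a string parameter and still has to be turned back into bytes before the prediction step can read it as an image.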

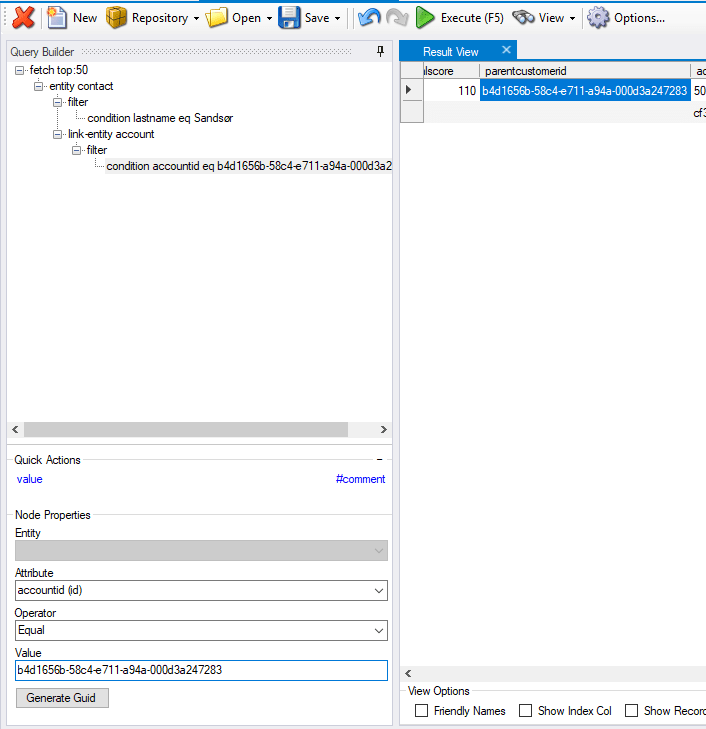

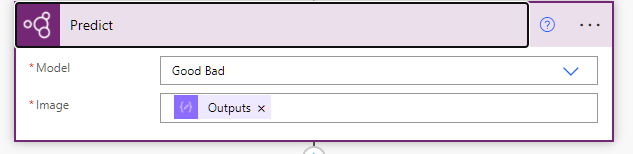

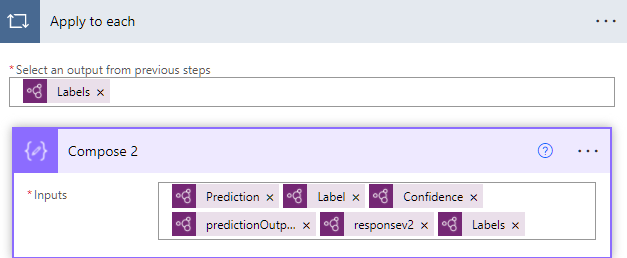

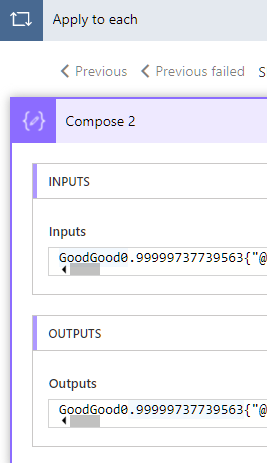

The prediction, on the other hand, worked out really nicely!! I found the model I had imported from Lobe and used the outputs from the Compose 3 action (base64 to binary). I have no idea what the differences are; I just accept that this is how it has to be done. I am sure there are other ways, but someone will have to teach me those in the future.

All in all it actually worked really well. As you can see here I added all types of outputs to learn from the data, but it was exactly as expected when taking a picture of Winnie the Pooh 😊 The bear was categorized as good, and my model was working.
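If you want to turn a pile of outputs like that into one answer, the usual trick is to pick the label with the highest confidence. Here is a small Python sketch of that idea. The list-of-dicts shape, the field names and the numbers are all hypothetical and chosen for illustration; they are not the actual AI Builder output format or results from this model.

```python
def top_prediction(predictions):
    """Return (label, confidence) for the highest-confidence entry."""
    if not predictions:
        raise ValueError("no predictions to choose from")
    best = max(predictions, key=lambda p: p["confidence"])
    return best["label"], best["confidence"]


# Invented numbers for illustration only, not real output from the model
# described in this post.
example = [
    {"label": "Good", "confidence": 0.93},
    {"label": "Bad", "confidence": 0.07},
]
label, confidence = top_prediction(example)
print(f"{label} ({confidence:.0%})")
```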

Why Lobe?

One can wonder why I chose Lobe for this when AI Builder has training functionality built into Power Platform. For my simple case it wouldn’t really have mattered which one I used; I just wanted to test out the newest tech.

When working with larger numbers of images, Lobe seems to be a lot easier for importing, tagging and training. Everything runs locally, so training and testing are also a lot faster. It’s also simple to retrain the model and upload it again. This being a hackathon, it was important to try new things out 😊

More about AI Builder

I talked to Joe Fernandez from the AI Builder team, and he pointed me to some resources that are nice to check out regarding this topic.

https://myignite.microsoft.com/sessions/a5da5404-6a25-4428-b4d0-9aba67076a08 <- forward to 11:50 for info regarding the AI Builder