For years, Microsoft has been selling the dream of Low-Code/No-Code: business users dragging boxes onto a Canvas, wiring up some Power Fx, and shipping “apps” without ever touching “real code.”

That era might be coming to an end.

If you’ve missed the recent announcements around Generative Pages in model-driven apps (they are covered in Microsoft’s documentation), let me put it bluntly:

👉 Microsoft is now letting you prompt your way into React code running within the Power Platform.

You read that right. Beneath the pretty maker studio UI, your app is no longer just quirky YAML with formulas glued on top. It’s React. Real code. A pro developer’s framework.

And while you can’t directly manipulate the code today, let’s not kid ourselves. If the code exists in the backend, the next logical step is exposing it. At that point, “makers” stop being Low-Code hobbyists and start working in an environment where everything you build is by definition Pro-Code under the hood.

Why This Matters: Canvas Apps Are the Walking Dead

Canvas apps have been Microsoft’s big Low-Code poster child. Drag, drop, squint, hack together logic with Power Fx, and pray your delegation warnings don’t tank performance in production.
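If you have never been bitten by a delegation warning, here is a rough sketch of what it means. This is a simplified simulation, not how Power Apps is actually implemented: the `Account` type, the 5,000-row `dataSource`, and both filter functions are hypothetical names made up for illustration. The one real detail is the row limit for non-delegable queries, which defaults to 500 and can be raised to 2,000.

```typescript
// Simplified simulation of delegation; NOT the platform's real implementation.
type Account = { id: number; country: string };

// Hypothetical data source with 5,000 rows; every 10th row is "NO".
const dataSource: Account[] = Array.from({ length: 5000 }, (_, i) => ({
  id: i + 1,
  country: (i + 1) % 10 === 0 ? "NO" : "SE",
}));

// Default row limit Power Apps applies to non-delegable queries.
const DATA_ROW_LIMIT = 500;

// Delegable query: the predicate runs at the data source, over every row.
function delegatedFilter(
  rows: Account[],
  predicate: (a: Account) => boolean
): Account[] {
  return rows.filter(predicate);
}

// Non-delegable query: only the first `rowLimit` rows reach the app,
// and the predicate runs on that partial copy.
function nonDelegatedFilter(
  rows: Account[],
  predicate: (a: Account) => boolean,
  rowLimit: number = DATA_ROW_LIMIT
): Account[] {
  return rows.slice(0, rowLimit).filter(predicate);
}

const isNorwegian = (a: Account): boolean => a.country === "NO";

const delegatedCount = delegatedFilter(dataSource, isNorwegian).length;
const nonDelegatedCount = nonDelegatedFilter(dataSource, isNorwegian).length;

console.log(delegatedCount, nonDelegatedCount); // prints "500 50"
```

Same formula, same data, and the non-delegable version silently returns a tenth of the matching rows. That is the kind of surprise the warning is trying to tell you about.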

But ask yourself:

- Would you trust a mission-critical banking or healthcare process to a Canvas app built on top of spaghetti formulas?

- Would any professional developer inherit a Canvas app and thank you for it?

Exactly.

Microsoft knows this. And that’s why the shift is happening:

- From Low-Code hacks → to Pro-Code foundations.

- From business hobby projects → to enterprise-ready apps.

- From citizen dev “I made this” → to “Consultants and business can both work on this.”

Canvas apps won’t disappear overnight, but make no mistake: they are becoming the VHS tapes of Power Platform. Nostalgic, clunky, and doomed.

The Big Upside: A Community Ready to Evolve

This is actually fantastic news. Why? Because for the first time, Power Platform makers are building on top of technology that pro devs actually respect.

React is everywhere. Every dev shop hires React talent. Suddenly, those “weird Power Apps” in the corner of your business are future-proof:

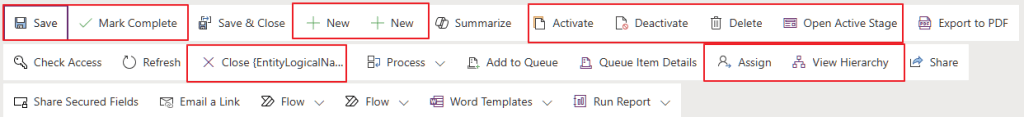

- Easier for pro devs to adapt. No more “what is Power Fx and why does it look like Excel’s evil twin?”

- Better ALM. With apps grounded in actual code, the days of Power Automate connection reference nightmares may finally fade away.

- Business-critical by design. If you want your Power Apps to run core processes, you want them running on real code—not a drag-and-drop toy.

The Provocative Take: Low-Code Isn’t “Dead”… It’s Reborn as Pro-Code

Let’s be clear: Low-Code isn’t disappearing. It’s transforming.

The promise of Low-Code (faster delivery, more accessible tooling, easier experimentation) will remain. But the foundation is shifting from fragile pseudo-code (Canvas + Power Fx + connectors duct-taped together) to robust, industry-standard codebases.

That’s the real revolution here:

- Business makers will still drag, drop, and prompt.

- The platform will turn those prompts into professional-grade code.

- Developers will finally have a sane way to extend, govern, and maintain those apps.

If you thought “fusion teams” were a buzzword before, wait until everyone is technically a React developer by default.

A Future Without Power Automate Nightmares?

Let me dream a little here. If Microsoft keeps doubling down on pro-code foundations in Power Platform, maybe—just maybe—we’ll finally:

- See the end of brittle Power Automate spaghetti flows.

- Eliminate connection reference chaos that breaks ALM pipelines.

- Build real software on Power Platform that lasts a lifetime.

The citizen dev name finally makes sense now. The “dev” part will actually be true 😀

We’re all pro coders now.

TL;DR

- Microsoft is quietly killing Low-Code as we know it.

- Canvas apps are already on life support.

- Generative Pages prove Power Platform is shifting toward real pro-code (React).

- This is a huge win for the community: easier ALM, pro dev collaboration, and apps that can actually be business-critical.

👉 Low-Code isn’t dead. It just grew up.