Background

For years, I’ve had this itch:

What if I could build my own app experience on top of Dynamics 365 — something cleaner, faster, and more modern than a Model-Driven App?

Model-Driven Apps gave us structure.

Canvas Apps gave us freedom.

Power Pages brought external users into the mix.

But the truth?

Every time I looked over the fence at “actual code”, I froze, without a single clue where to start…

This summer I opened VS Code, enabled GitHub Copilot, paired it with Claude, and suddenly… the digital barrier that had been there my whole life was gone🤯.

I didn’t magically become a full-stack dev — but AI gave me just enough superpowers to build what I used to only imagine.

So I spent the next few months learning to become a better prompter, because prompting seems to be what separates a good AI-agent developer from a bad one.

I wanted to experience that transition from the driver’s seat.

What

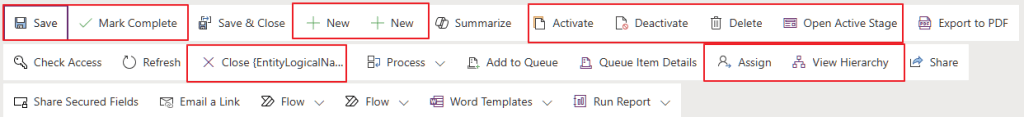

Let’s get this out of the way: I did everything wrong first.

I over-engineered front-end tech.

Played with services I had no business touching.

Tried Azure B2C.

Then B2B.

Failed authentication like a champion😆😆

Eventually, humility kicked in and I pivoted toward something more practical and more community-friendly.

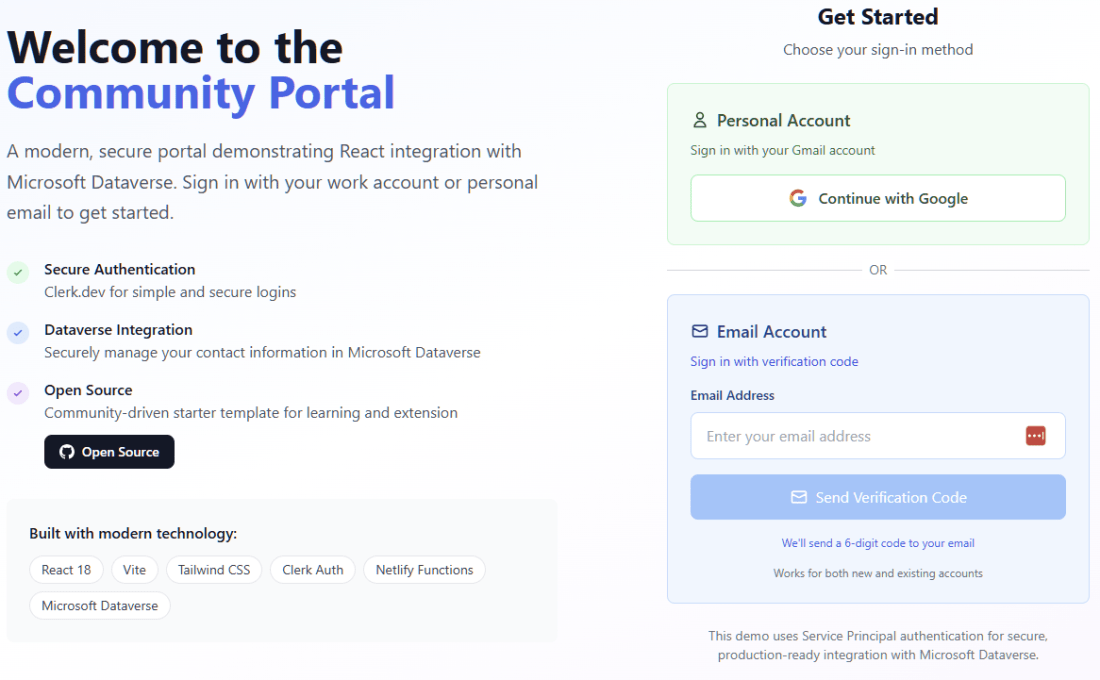

✅ Netlify for hosting the frontend

✅ Netlify Functions as my API + Auth proxy

✅ React, because Claude told me to

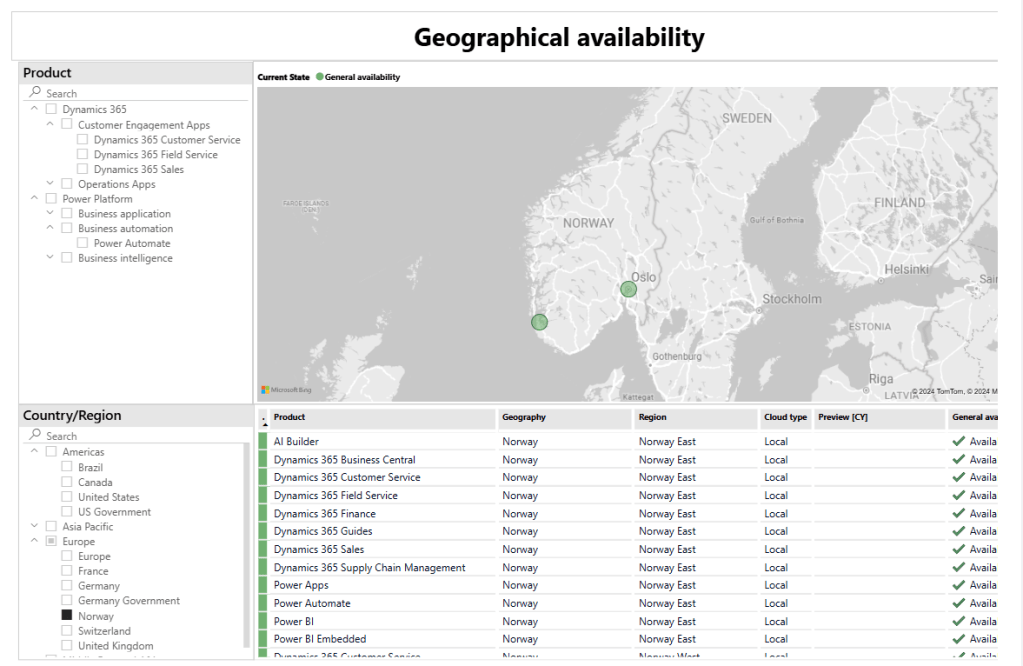

✅ Dataverse as my backend

✅ GitHub + Copilot + Claude as my “coding superbrain”

✅ Clerk.dev to handle identity
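The post doesn’t walk through the repo’s code, so here is a minimal sketch of what the “API + Auth proxy” idea can look like. It is not the project’s actual implementation. It assumes the Netlify Function has already verified the user’s session (for example with Clerk) and holds a Dataverse access token server-side. `buildContactRequest`, `VerifiedUser` and the org URL are hypothetical names, and matching users to Dataverse contacts by email is an assumption. The point is that the browser never sees the Dataverse token, and the function decides which rows a user may read.

```typescript
// Hypothetical shape of the user identity AFTER the session token has been
// verified (assumption: verification is done elsewhere, e.g. by Clerk).
interface VerifiedUser {
  email: string;
}

// What the proxy would send on to the Dataverse Web API.
interface DataverseRequest {
  url: string;
  headers: Record<string, string>;
}

// Wrap a value as an OData string literal; single quotes are escaped as ''.
function odataString(value: string): string {
  return `'${value.replace(/'/g, "''")}'`;
}

// Build a Dataverse Web API query that only returns the signed-in user's
// contact row. orgUrl and accessToken are assumed to come from server-side
// environment variables, so they never reach the browser.
function buildContactRequest(
  orgUrl: string,
  accessToken: string,
  user: VerifiedUser,
): DataverseRequest {
  const select = "contactid,firstname,lastname,emailaddress1";
  // Assumption: users are mapped to contacts via emailaddress1.
  const filter = encodeURIComponent(
    `emailaddress1 eq ${odataString(user.email)}`,
  );
  return {
    url: `${orgUrl}/api/data/v9.2/contacts?$select=${select}&$filter=${filter}`,
    headers: {
      Authorization: `Bearer ${accessToken}`,
      Accept: "application/json",
      "OData-MaxVersion": "4.0",
      "OData-Version": "4.0",
    },
  };
}

// Usage inside a (hypothetical) function handler:
const req = buildContactRequest(
  "https://contoso.crm.dynamics.com", // hypothetical org URL
  "<server-side token>",
  { email: "o'brien@example.com" },
);
// The handler would then call fetch(req.url, { headers: req.headers })
// and return only the JSON body to the React frontend.
```

Scoping the filter on the server, rather than trusting whatever the React app asks for, is what makes the function a proxy and not just a pass-through.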

After weeks and weeks of late nights spent swearing at an AI that never seemed to understand what I was actually trying to solve, it finally worked👏👏.

- A portal.

- Not over-engineered.

- Just a clean, simple app that talks to Dynamics and feels… modern.

The project: https://github.com/thomassandsor/CommunityPortal

Is it enterprise-ready?

🛑No🛑

Is it secure?

I sure hope so

Did it need to exist?

Probably not😆😆

But I made it.

And honestly, I love it💖💖💖💖

Why?

I built this because I wanted to learn, not because the world needed “Thomas Portal v1”.

If I wanted speed (time to market), I would have stayed in Power Pages.

If I wanted stability, I would have stayed in Model-Driven land.

But I wanted to understand the future, and for that I had to become a coder… a coder with extreme AI backing 😉

And here’s the real point:

The future of low-code isn’t “no code.”

It’s augmented code.

Makers will write code, guided by AI, and that’s just something we need to come to terms with.

Microsoft is already opening up AI-generated code for editing, so it’s only a matter of time before we get a hybrid experience where real dev and AI dev work in “harmony”.

When these two worlds align, the difference between a maker and a developer comes down to curiosity and a willingness to try.

Curiosity is the only qualification I had when I started this.

So now I’m sharing my journey not to show off, but to give others a trailhead.

If you’ve ever thought,

“Could I build something real outside of Dynamics/Power Platform?”

The answer is YES.

All you need is VS Code, some stubbornness, and an AI that believes in you more than your JavaScript error messages do.

Going forward

And now, with Microsoft’s latest announcement that Copilot can build apps and workflows from natural-language prompts, it’s even clearer:

Low-code isn’t dying.

It’s evolving into augmented coding.