Click here to view all Plugins 4 Dummies Videos

There are many reasons why code is still very relevant. In this video, I share with you all of my thoughts on this particular topic.

Did you recently see the update from Power CAT announcing 3 dev environments per user?! Having more than one dev environment has been discussed in several forums over the last few years, and finally it is here. Go check out the video on YouTube for the details:

In short a few key takeaways:

Or should I say https://make.preview.powerapps.com <- for now :) The ability to add environments from the make.powerapps portal is not yet rolled out to everyone, so go to the PREVIEW maker portal and you should be able to see it there. This is important for users without access to admin.powerplatform.com.

Go ahead and try it out and see where the limitations are. The important part here is to learn new things by trying them out. Enjoy!

Initially, I was just going to explore some possibilities with Custom Pages, but decided to make a solution out of it instead. This way you can just download the solution and do the required modifications for your project (GIPHY API key), and you are good to go😁

I am really excited to hear your feedback after seeing/trying this out, and hopefully, I will be able to make it a lot better in next revisions of the Custom Page!

Go to my GitHub to get a hold of the solution. It’s a pretty “thin” solution that should be easy to configure and easy to delete if you no longer need it.

💾 DOWNLOAD 💾

I have also included a video displaying how you install and use the solution

Because of ribbon changes, I was forced to create a managed solution for this. It was the only way to avoid overwriting the current Opportunity ribbon.

If you want to play around with the solution, download the unmanaged version. Just make sure you don’t have any other ribbon customizations on Opportunity. If you do, back them up first!!

For everyone else, you have to download the managed solution to be on the safe side.

On my GitHub page I have listed the components that get installed, so you can easily remove them all at a later point in time.

Did you think my last post was the final stage of the “Custom Page – Win Notification“? Of course not!! We have one more important step to complete the whole solution.

I am all about salespeople being able to boast about their sales. If you meet a salesperson who doesn’t love to brag about closing, are they even in sales? 😉

Communication is key when you work in organizations of any size and location (on-prem, online or hybrid). It doesn’t matter where you work; we can all agree that spreading the word and making sure everyone gets the latest news is hard. Microsoft is making it pretty obvious that Teams is the preferred channel, so that’s where I also wanted to focus my energy.

I decided to extend the solution a bit to reuse the GIF that we got from the last closing screen, and include it in the Teams integration that I wrote about earlier in the Adaptive Cards.

The first thing I did was to add a new group of fields for the Teams notification. I wanted to let the user choose if they wanted to post the sales to Teams or not. The obvious reason is that some opportunities might have to be reopened, and you don’t want the credit 2 times etc. It’s just a simple logo, text and boolean field.

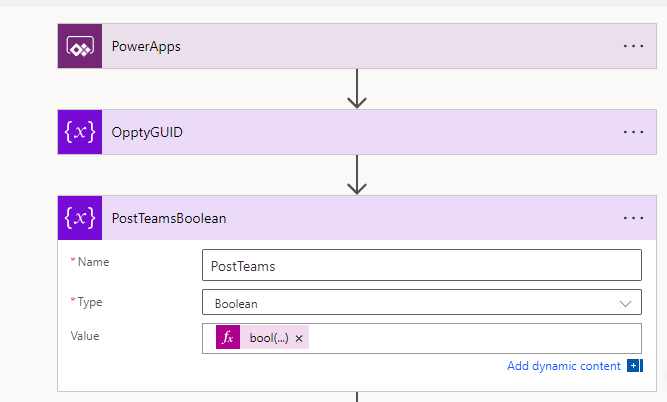

The next step is adding the variable BoolPostTeams to the run statement of the Power Automate when I click “Confirm Win”.

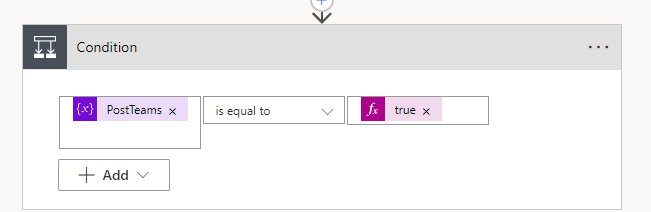

On the Flow side of things, I now receive one more variable that I can handle. Because I wanted to use the bool type, I have to convert the string “true”/“false” to a boolean.
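To illustrate why the explicit conversion matters, here is a minimal JavaScript sketch of the same idea. It is not part of the flow; `parseFlag` is a hypothetical helper name, and it assumes the app sends the text “true” or “false”. A plain truthiness check would treat both strings as true, so the text has to be compared explicitly.

```javascript
// Hypothetical helper (not from the solution): turns the text "true"/"false"
// into a real boolean, which is the job bool() does in the flow.
function parseFlag(value) {
  if (typeof value === "boolean") return value;
  const text = String(value).trim().toLowerCase();
  if (text === "true") return true;
  if (text === "false") return false;
  throw new Error("Expected 'true' or 'false', got: " + value);
}

console.log(Boolean("false"));   // true, because any non-empty string is truthy
console.log(parseFlag("false")); // false
```

Throwing on unexpected input is a design choice for the sketch; in a flow you would more likely fall back to not posting to Teams.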

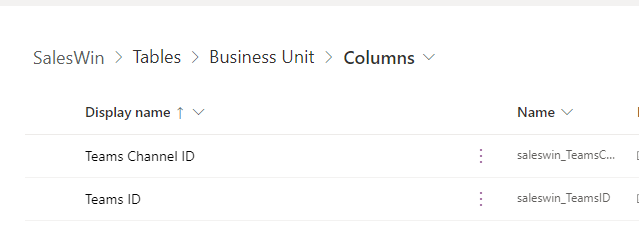

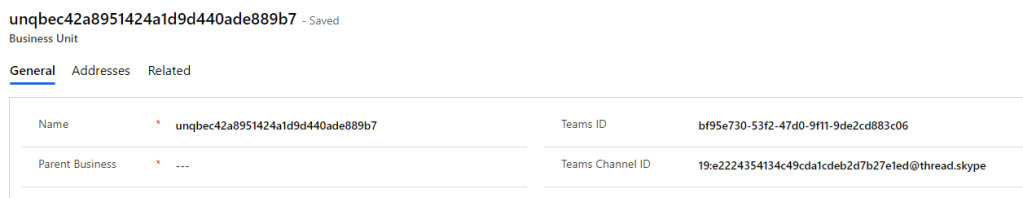

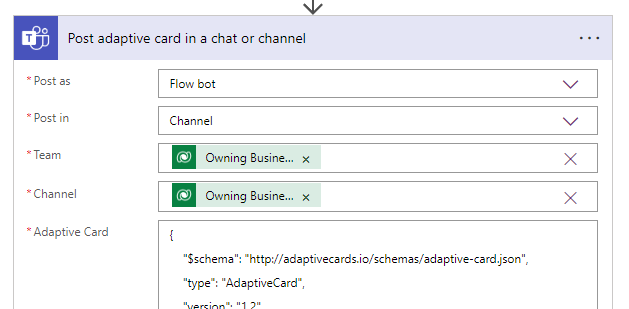

bool(triggerBody()['Initializevariable2_Value'])

Glad you asked 😉 A part of the solution is to include a Team ID and Channel ID. I added these fields to the Business Unit table. When we store the Teams/Channel ID’s at this level, we can reuse the same solution for several teams in an organization without having to hard code anything.

These are simple text fields that will hold the unique values to the Team and the Channel you want to notify.

NB! A tip for getting the Teams ID and the Channel ID:

Add the Team and Channel via the drop-down selector (flow step below). Then open the “peek code” to see what the ID’s of the teams are. If you don’t understand this step, just have a look at my video in the final post of the series 🎥

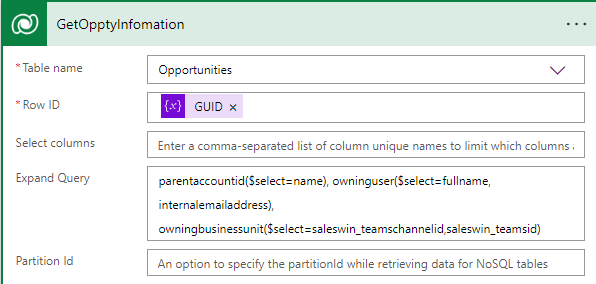

We update the get Opportunity with extra linked tables. This way we don’t have to do multiple retrieves, and only get the fields we need from the linked tables.
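To make the shape of the expand value easier to read, here is a small JavaScript sketch that assembles the same kind of string from a list of linked tables and the columns wanted from each. `buildExpand` is a hypothetical helper for illustration only; in the flow itself you simply paste the finished string into the Expand Query field.

```javascript
// Hypothetical helper: builds an OData $expand value from
// { navigationProperty: [columns] } pairs.
function buildExpand(selection) {
  return Object.entries(selection)
    .map(([navProperty, columns]) => `${navProperty}($select=${columns.join(",")})`)
    .join(",");
}

const expand = buildExpand({
  parentaccountid: ["name"],
  owninguser: ["fullname", "internalemailaddress"],
  owningbusinessunit: ["saleswin_teamschannelid", "saleswin_teamsid"]
});

console.log(expand);
```

Each linked table gets its own `$select`, which is what keeps the retrieve down to only the fields the card needs.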

parentaccountid($select=name), owninguser($select=fullname, internalemailaddress), owningbusinessunit($select=saleswin_teamschannelid,saleswin_teamsid)

After we update the opportunity as closed with our Custom Action “close opportunity”, we check if the close dialog wanted to notify the team.

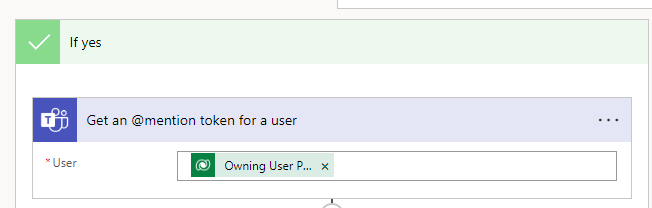

Then we need to get the @mention tag for the given user that made the sales. Here I am using the email address from the SystemUser table we are getting from the Opportunity extended table.

Finally, we post the Adaptive Card to Teams and behold the wonder of the adaptive card!! 💘

{

"$schema": "http://adaptivecards.io/schemas/adaptive-card.json",

"type": "AdaptiveCard",

"version": "1.2",

"body": [

{

"speak": "Sales Morale Boost",

"type": "ColumnSet",

"columns": [

{

"type": "Column",

"width": 8,

"items": [

{

"type": "TextBlock",

"text": "🚨WIN ALERT🚨",

"weight": "Bolder",

"size": "ExtraLarge",

"spacing": "None",

"wrap": true,

"horizontalAlignment": "Center",

"color": "Attention",

"fontType": "Default"

},

{

"type": "TextBlock",

"text": "@{outputs('GetOpptyInfomation')?['body/name']}",

"wrap": true,

"size": "Large",

"weight": "Bolder",

"horizontalAlignment": "Center"

},

{

"type": "ColumnSet",

"columns": [

{

"type": "Column",

"width": 25,

"items": [

{

"type": "TextBlock",

"text": "🏠 Customer",

"wrap": true,

"size": "Large",

"weight": "Bolder",

"horizontalAlignment": "Right"

},

{

"type": "TextBlock",

"text": "💲 Value",

"wrap": true,

"size": "Large",

"weight": "Bolder",

"horizontalAlignment": "Right"

},

{

"type": "TextBlock",

"text": "👩‍🦲 Seller",

"wrap": true,

"size": "Large",

"weight": "Bolder",

"horizontalAlignment": "Right"

}

]

},

{

"type": "Column",

"width": 50,

"items": [

{

"type": "TextBlock",

"text": "@{outputs('GetOpptyInfomation')?['body/parentaccountid/name']}",

"size": "Large",

"weight": "Bolder"

},

{

"type": "TextBlock",

"text": "@{outputs('GetOpptyInfomation')?['body/actualvalue']}",

"weight": "Bolder",

"size": "Large"

},

{

"type": "TextBlock",

"text": "@{outputs('Get_an_@mention_token_for_a_user')?['body/atMention']}",

"weight": "Bolder",

"size": "Large"

}

]

}

]

}

]

}

]

},

{

"type": "Image",

"url": "@{body('Parse_JSON')?['data']?['images']?['original']?['url']}",

"horizontalAlignment": "Center"

}

]

}
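The card above is static JSON with flow expressions embedded in it. As a reduced illustration of the same structure, this JavaScript sketch builds a cut-down card (headline, opportunity name and GIF only) from plain values. `buildWinCard` and its parameter names are assumptions made for the example; the sample values are made up.

```javascript
// Hypothetical helper: a trimmed-down version of the card above,
// built from values instead of flow expressions.
function buildWinCard({ opportunityName, gifUrl }) {
  return {
    $schema: "http://adaptivecards.io/schemas/adaptive-card.json",
    type: "AdaptiveCard",
    version: "1.2",
    body: [
      {
        type: "TextBlock",
        text: "🚨WIN ALERT🚨",
        weight: "Bolder",
        size: "ExtraLarge",
        horizontalAlignment: "Center",
        color: "Attention",
        wrap: true
      },
      {
        type: "TextBlock",
        text: opportunityName,
        weight: "Bolder",
        size: "Large",
        horizontalAlignment: "Center",
        wrap: true
      },
      { type: "Image", url: gifUrl, horizontalAlignment: "Center" }
    ]
  };
}

// Sample values are placeholders, not real data.
const card = buildWinCard({
  opportunityName: "Sample opportunity",
  gifUrl: "https://example.com/win.gif"
});

console.log(JSON.stringify(card, null, 2));
```

Building the card as an object and serializing it at the end avoids broken quotes or commas when a value contains special characters.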

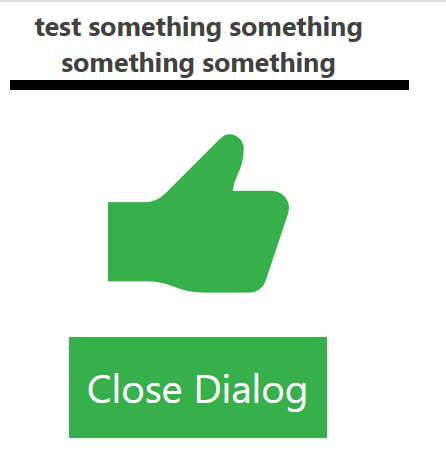

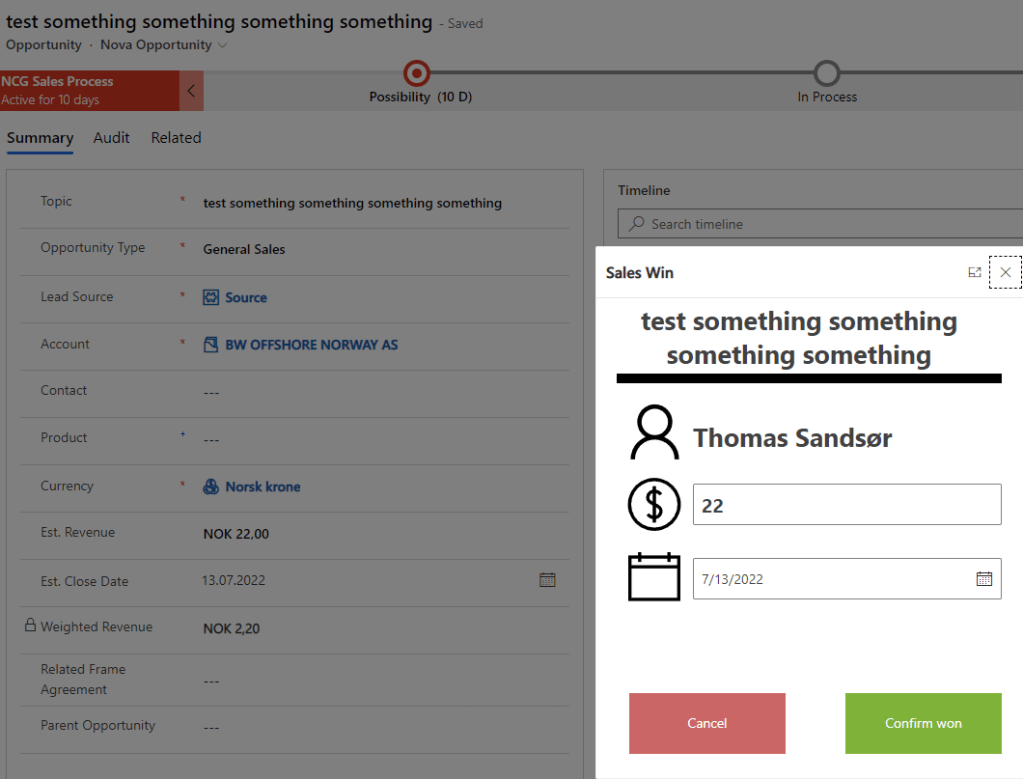

Now, this is what an opportunity close dialog should look like!! 🙏🏆🌟🎉

Most of you might know by now that I am a huge fan of GIF’s. I have written about GIF’s earlier when trying to motivate sales people, and I thought I would turn it up one notch!

Last post I wrote was about the opportunity close dialog. It was functional, but not exciting.

It is still 100% more feedback friendly than the Microsoft OOTB functionality, but it’s a little boring now that we have the option to be creative. This is why I thought I would add my earlier GIF post together with this to create a solution for instant response to the salespeople.

We all know that there is no better feeling than finding the correct GIF! 😂

So let’s start off by including a flow that I have written earlier with the Custom Page Confirmation dialog to make it all a little better than the thumbs up that my last post was showcasing.

In the last post we covered the Power Automate action that runs when the confirmation button is pressed. Now we reopen it to add a few more steps. The steps are identical to the ones in the article about the GIF connector that I linked to above.

First let’s query for a WIN gif.

Go to Giphy and set up a developer account for free. I wrote about it in the GIF post linked at the top. Just replace the api_key value in the string:

https://api.giphy.com/v1/gifs/random?api_key=**********&tag=win
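If you want to see how the request string is put together, this JavaScript sketch builds the same URL from its two parameters. `buildRandomGifUrl` is a hypothetical helper, and `YOUR_API_KEY` is a placeholder for the key from your own Giphy developer account.

```javascript
// Hypothetical helper: assembles the random-GIF request URL used above.
function buildRandomGifUrl(apiKey, tag) {
  const url = new URL("https://api.giphy.com/v1/gifs/random");
  url.searchParams.set("api_key", apiKey); // placeholder key in this sketch
  url.searchParams.set("tag", tag);        // search tag, "win" in this post
  return url.toString();
}

console.log(buildRandomGifUrl("YOUR_API_KEY", "win"));
// https://api.giphy.com/v1/gifs/random?api_key=YOUR_API_KEY&tag=win
```

Using `searchParams` rather than string concatenation means a tag containing spaces or special characters gets encoded for you.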

Parse the return of this query (SORRY.. This is a bit long!)

{

"type": "object",

"properties": {

"data": {

"type": "object",

"properties": {

"type": {

"type": "string"

},

"id": {

"type": "string"

},

"url": {

"type": "string"

},

"slug": {

"type": "string"

},

"bitly_gif_url": {

"type": "string"

},

"bitly_url": {

"type": "string"

},

"embed_url": {

"type": "string"

},

"username": {

"type": "string"

},

"source": {

"type": "string"

},

"title": {

"type": "string"

},

"rating": {

"type": "string"

},

"content_url": {

"type": "string"

},

"source_tld": {

"type": "string"

},

"source_post_url": {

"type": "string"

},

"is_sticker": {

"type": "integer"

},

"import_datetime": {

"type": "string"

},

"trending_datetime": {

"type": "string"

},

"images": {

"type": "object",

"properties": {

"downsized_large": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"fixed_height_small_still": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"original": {

"type": "object",

"properties": {

"frames": {

"type": "string"

},

"hash": {

"type": "string"

},

"height": {

"type": "string"

},

"mp4": {

"type": "string"

},

"mp4_size": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"webp": {

"type": "string"

},

"webp_size": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"fixed_height_downsampled": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"webp": {

"type": "string"

},

"webp_size": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"downsized_still": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"fixed_height_still": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"downsized_medium": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"downsized": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"preview_webp": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"original_mp4": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"mp4": {

"type": "string"

},

"mp4_size": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"fixed_height_small": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"mp4": {

"type": "string"

},

"mp4_size": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"webp": {

"type": "string"

},

"webp_size": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"fixed_height": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"mp4": {

"type": "string"

},

"mp4_size": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"webp": {

"type": "string"

},

"webp_size": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"downsized_small": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"mp4": {

"type": "string"

},

"mp4_size": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"preview": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"mp4": {

"type": "string"

},

"mp4_size": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"fixed_width_downsampled": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"webp": {

"type": "string"

},

"webp_size": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"fixed_width_small_still": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"fixed_width_small": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"mp4": {

"type": "string"

},

"mp4_size": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"webp": {

"type": "string"

},

"webp_size": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"original_still": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"fixed_width_still": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"looping": {

"type": "object",

"properties": {

"mp4": {

"type": "string"

},

"mp4_size": {

"type": "string"

}

}

},

"fixed_width": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"mp4": {

"type": "string"

},

"mp4_size": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"webp": {

"type": "string"

},

"webp_size": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"preview_gif": {

"type": "object",

"properties": {

"height": {

"type": "string"

},

"size": {

"type": "string"

},

"url": {

"type": "string"

},

"width": {

"type": "string"

}

}

},

"480w_still": {

"type": "object",

"properties": {

"url": {

"type": "string"

},

"width": {

"type": "string"

},

"height": {

"type": "string"

}

}

}

}

},

"user": {

"type": "object",

"properties": {

"avatar_url": {

"type": "string"

},

"banner_image": {

"type": "string"

},

"banner_url": {

"type": "string"

},

"profile_url": {

"type": "string"

},

"username": {

"type": "string"

},

"display_name": {

"type": "string"

},

"description": {

"type": "string"

},

"is_verified": {

"type": "boolean"

},

"website_url": {

"type": "string"

},

"instagram_url": {

"type": "string"

}

}

}

}

},

"meta": {

"type": "object",

"properties": {

"msg": {

"type": "string"

},

"status": {

"type": "integer"

},

"response_id": {

"type": "string"

}

}

}

}

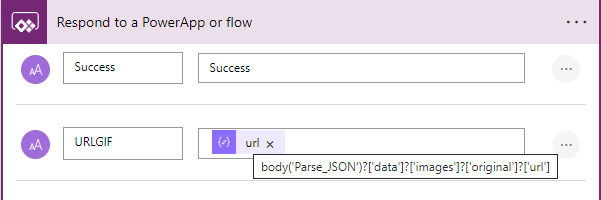

}At the end we have to respond to the app with the GIF URL that we want to use. There are many variables that have the same name “URL”, so make sure you find the one that I am using below.
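Because so many properties in the response are called “url”, it can help to see the exact path written out. This JavaScript sketch picks `data.images.original.url`, the same path the Adaptive Card expression in this series uses. `pickOriginalGifUrl` is a hypothetical helper, and the sample response is trimmed and made up for the example.

```javascript
// Hypothetical helper: returns data.images.original.url, or null when
// the response does not contain it.
function pickOriginalGifUrl(giphyResponse) {
  const url = giphyResponse?.data?.images?.original?.url;
  return typeof url === "string" && url.length > 0 ? url : null;
}

// Trimmed, made-up sample with three different "url" properties.
const sampleResponse = {
  data: {
    url: "https://example.com/page-for-the-gif",
    images: {
      original: { url: "https://example.com/original.gif" },
      preview_gif: { url: "https://example.com/preview.gif" }
    }
  },
  meta: { status: 200, msg: "OK" }
};

console.log(pickOriginalGifUrl(sampleResponse));
```

Returning `null` for a missing path lets the caller decide on a fallback image instead of showing a broken one.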

The “Confirm Win” button now has to do a few more things, so here is the added code.

//Patch the Oppty fields

Patch(

Opportunities,

LookUp(

Opportunities,

Opportunity = GUID(VarOppportunity.opportunityid)

),

{

'Actual Close Date': EstClosingDate.Value,

'Actual Revenue': Int(EstimatedRevenue.Value)

}

);

//Update Opportunity Close entity and retrieve GIF

Set(varFlow, CloseOpptyPostTeams.Run(VarOppportunity.Opportunity));

Set(varGIF, varFlow.urlgif);

//Hide input boxes and show confirmation

Set(

varConfirmdetails,

false

);

Set(

varCongratulations,

true

);

Mostly the only difference here is adding 2 variables. The first holds the actual response from Power Automate (all of the fields), and the second, “varGIF”, is used by the new image component that we add to the sales confirmation box.

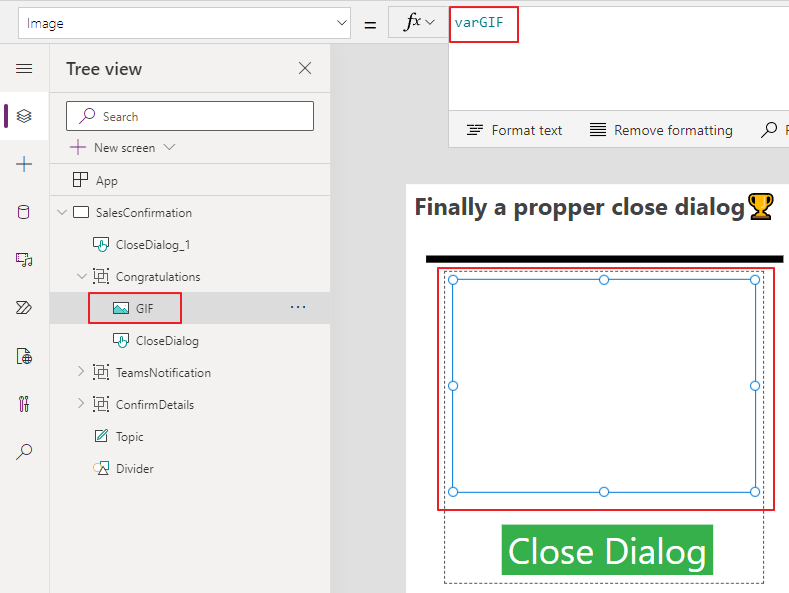

Add an image to the Congratulations group, and reference the varGIF

When the sales person now closes the dialog, they get a 100% more awesome experience than the OOTB version!!! 🏆👍🎉

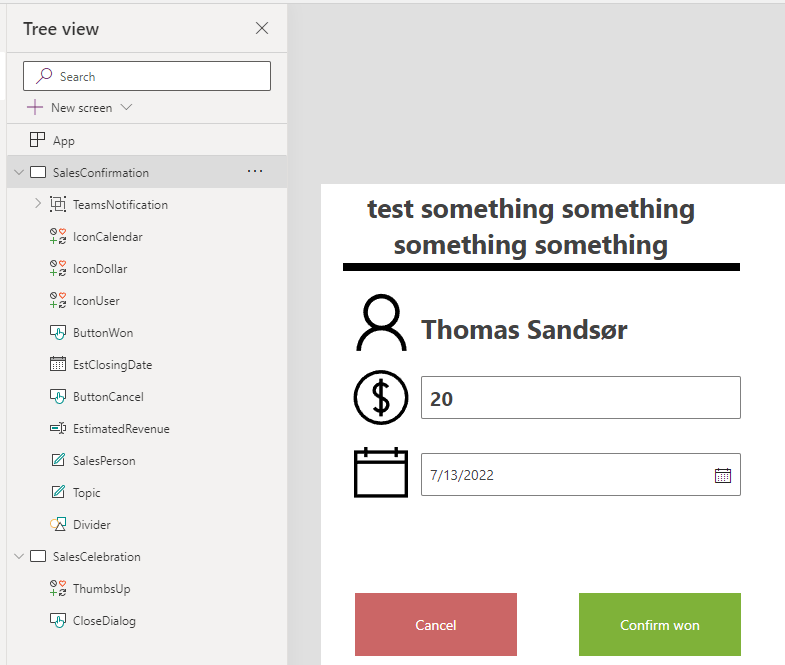

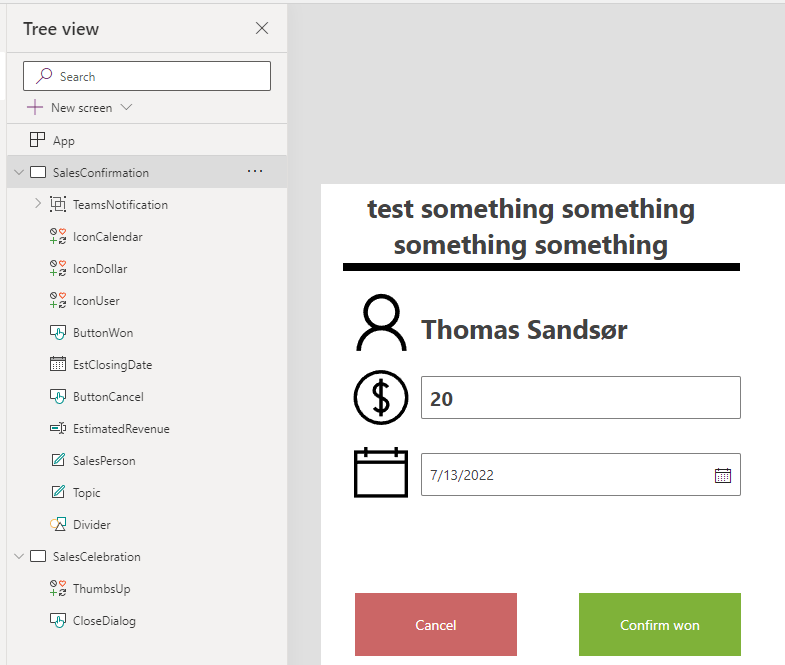

In the last post, we created a new close dialog, but we didn’t add any logic to the buttons.

The most important parameter we send in via JavaScript last time was the GUID of the record that we are going to work with.

The first thing we do is add an OnLoad to the app and perform a lookup as the very first step. This gives us all of the data for the given Opportunity to use within the Power App. We store the whole record in a variable, “VarOppportunity” (spelled with three p’s in the formulas below).

A little clever step here is actually the “First(Opportunities)”. For testing purposes, this opens the first Opportunity in the DB if you open the app without the GUID from Dynamics, so you can test the app in the make.powerapps.com studio without having to pass a parameter to the Custom Page 👍

ONLOAD

Set(VarOppportunity,

If(IsBlank(Param("recordId")),

First(Opportunities),

LookUp(Opportunities, Opportunity = GUID(Param("recordId"))))

)

FIELDS

Fields can now be added via the “VarOppportunity” variable, which contains all of the data for the loaded opportunity.

BUTTONS

The cancel button only has “Back()” as a function to close the dialog, but the “Confirm WIN” button has a Patch statement for Opportunity.

//Patch the Opportunity fields

Patch(

Opportunities,

LookUp(

Opportunities,

Opportunity = GUID(VarOppportunity.opportunityid)

),

{

'Actual Close Date': EstClosingDate.Value,

'Actual Revenue': Int(EstimatedRevenue.Value)

}

);

//Hide input boxes and show confirmation

Set(

varConfirmdetails,

false

);

Set(

varCongratulations,

true

);

HIDE/SHOW

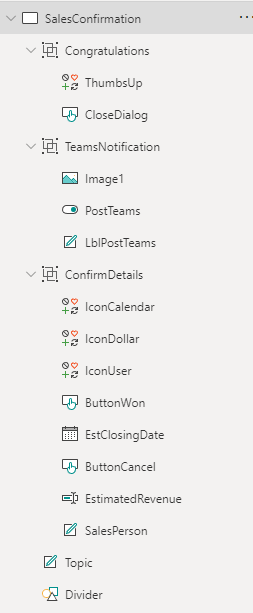

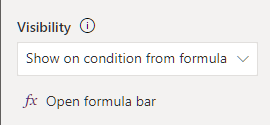

Because of some challenges I met with multiple screens, I had to use a single Screen with hide/show logic. Therefore I added all the fields to Groups, and I hide or show them group by group.

The Congratulations group looks like this.

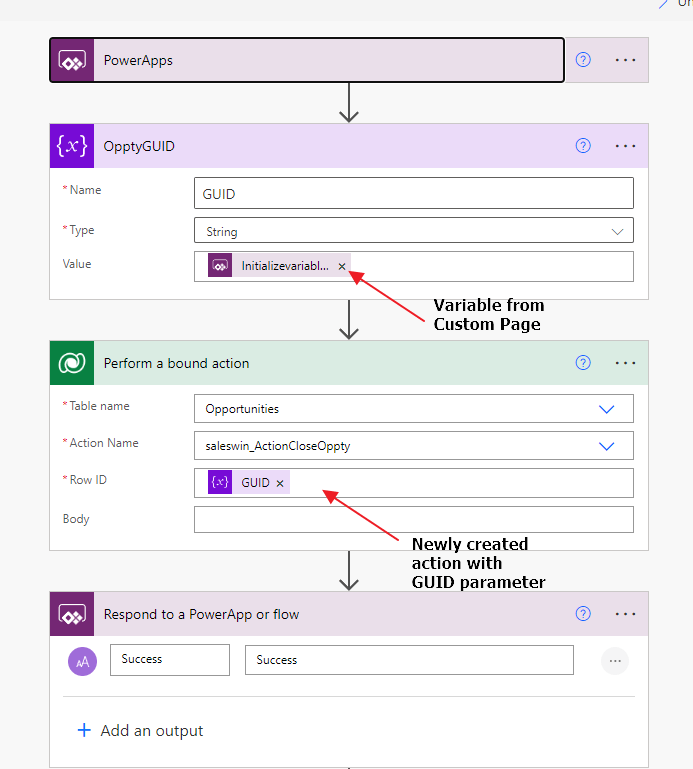

If this were a custom entity we could close the opportunity by setting the Status and Status Reason values. Unfortunately, the Opportunity has its own function for closing that creates an Opportunity Close record. In order for this to work, we have to call a custom service for closing the Opportunity. This does get a bit tricky.

We now have to call an action from Power Automate to close the opportunity as WON. At the moment of writing the blog, the process of calling the Microsoft action in Power Automate wasn’t working, so I created my own action. I will show you how, and honestly maybe even recommend doing it this way for now. It works all of the time and uses the technology that has been working in CRM since 4.0.

Actions work with the same logic as a Workflow, but they can be fired at any time from anywhere. They can receive inputs and generate outputs. A workflow only triggers from CRUD events and works within the context of the record that triggered it. Actions are in many ways an underrated function in Dynamics / Dataverse.

It’s a pretty simple step updating the status of the opportunity to “won”, and by doing it this way the system will automatically do the correct calls in the API for Opportunity Close.

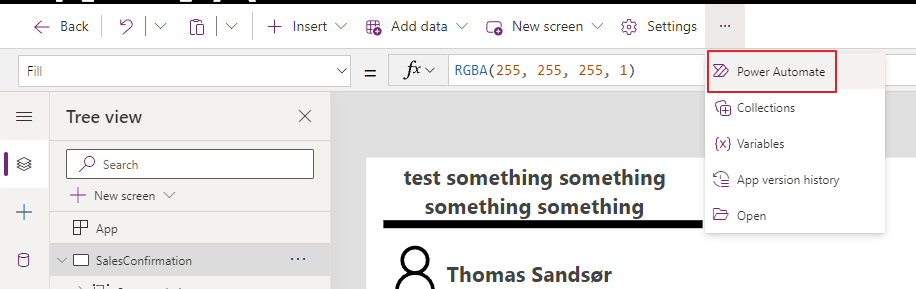

This is all you need for the action. After activation, we can go back to the custom page and create an instant flow (Power Automate).

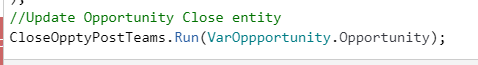

In the Custom Page we now add a line to our “Confirm WIN” button. (Yes, I know we probably should add some logic for success/fail, but that will be a part of the final solution on GitHub).

//Patch the Opportunity fields

Patch(

Opportunities,

LookUp(

Opportunities,

Opportunity = GUID(VarOppportunity.opportunityid)

),

{

'Actual Close Date': EstClosingDate.Value,

'Actual Revenue': Int(EstimatedRevenue.Value)

}

);

//Hide input boxes and show confirmation

Set(

varConfirmdetails,

false

);

Set(

varCongratulations,

true

);

//Update Opportunity Close entity

CloseOpptyPostTeams.Run(VarOppportunity.Opportunity);

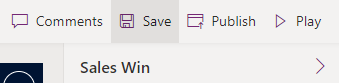

You should now be able to close the opportunity as won via a custom page. Just remember to publish the custom page AND publish the app again. If not, it will not show. Do remember to give it a few moments before refreshing after a change.

I am using Dynamics 365 as an example, but the process is the same for Power Apps model-driven apps. The only difference is that Dynamics 365 has the Opportunity table that we want to use.

If you want to learn more about Custom pages I suggest you look at the following posts:

Scott Durow

Lisa Crosbie

MCJ

Microsoft Custom Pages

I have also added the simple button setup to GitHub, if you just want to give it a go.

👉DOWNLOAD SIMPLE BUTTON HERE👈

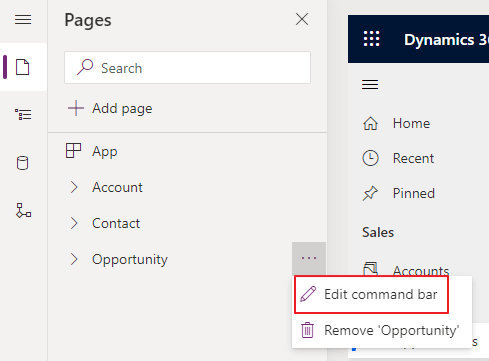

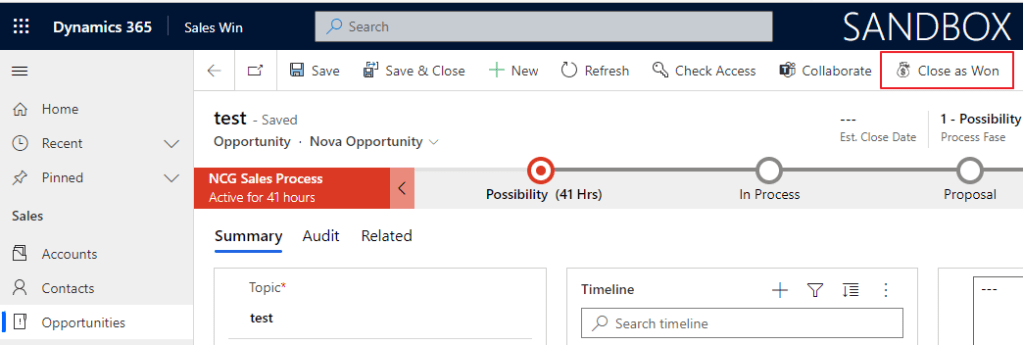

Start off by adding an app to a solution and giving it a proper name. In my case, I am choosing Sales Win, because I am creating a simple app for everyone to see the functionality. You could of course just add this to your existing app if that is what you want to do.

For the tricky part, you need to open the Command Bar. This is essentially Ribbon Workbench jr 😉 In time I would imagine that most of the Ribbon Workbench will be available here, but we are probably still a few years away from a complete transition.

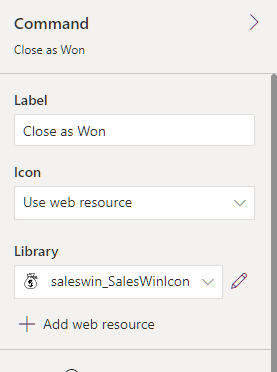

Choose the main form for this exercise, and then create a new Command. A Command is actually a button. Why it’s called Command I’m not sure, as we are all used to adding buttons to a ribbon 🤷‍♂️

On the right side, you can add an image, and I usually find mine through SVG’s online.

SVG’s for download <- a blog post I wrote about the topic. Add this as a webresource.

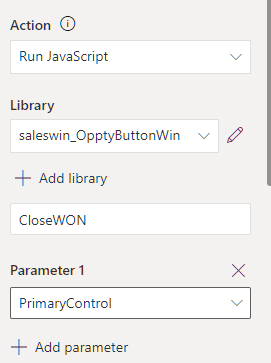

Now, we are going to have to create some JavaScript. It’s actually the only way to open a Custom Page. The JavaScript is pretty “simple”, and I will provide you with the copy-paste version.

🛑BEFORE YOU STOP READING BECAUSE OF JAVASCRIPT🛑

I will provide the JavaScript you need. Unfortunately, you will still need to understand how to copy-paste some script for stuff like custom pages to work.

The only parameter you have to change here if you are creating everything from scratch is:

name: “saleswin_saleswin_c0947” <- replace with the name of your custom page.

function CloseWON(formContext) {

//Get Opportunity GUID and remove {}

var recordGUID = formContext.data.entity.getId().replace(/[{}]/g, "");

// Centered Dialog

var pageInput = {

pageType: "custom",

name: "saleswin_saleswin_c0947", //Unique name of Custom page

entityName: "opportunity",

recordId: recordGUID,

};

var navigationOptions = {

target: 2,

position: 1,

width: {value: 450, unit: "px"},

height:{value: 550, unit: "px"}

};

Xrm.Navigation.navigateTo(pageInput, navigationOptions)

.then(

function () {

// Called when the dialog closes

formContext.data.refresh();

}

).catch(

function (error) {

// Handle error

alert("CANCEL");

}

);

}

This JavaScript opens up a custom page and sends in a GUID parameter (look at PageInput) to use within the page. This is just how Custom Pages work, so don’t try to do any magic here!
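The part of the script that is easiest to get wrong is the record ID, since the form returns it wrapped in curly braces. This sketch isolates that step in a small function you can try outside Dynamics. `buildWinPageInput` is a hypothetical helper, and the GUID is a made-up sample; the returned object has the same properties as the pageInput in the script above.

```javascript
// Hypothetical helper: builds the pageInput object for a custom page,
// stripping the curly braces from the record ID.
function buildWinPageInput(rawRecordId, customPageName) {
  return {
    pageType: "custom",
    name: customPageName, // unique name of your custom page
    entityName: "opportunity",
    recordId: rawRecordId.replace(/[{}]/g, "")
  };
}

// Made-up sample GUID in the braced format.
const pageInputSample = buildWinPageInput(
  "{0A1B2C3D-0000-0000-0000-000000000001}",
  "saleswin_saleswin_c0947"
);

console.log(pageInputSample.recordId);
// 0A1B2C3D-0000-0000-0000-000000000001
```

Keeping this step in its own function means the only thing left in the ribbon handler is the call to Xrm.Navigation.navigateTo.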

Other examples of how to load a custom page:

Microsoft Docs Custom Page

It’s important to hide or show the button at the correct times, and that is why you have to add logic. With the following function, the button is only visible when the Opportunity is in edit mode. When creating a new Opportunity you will not see this button.

Self.Selected.State = FormMode.Edit

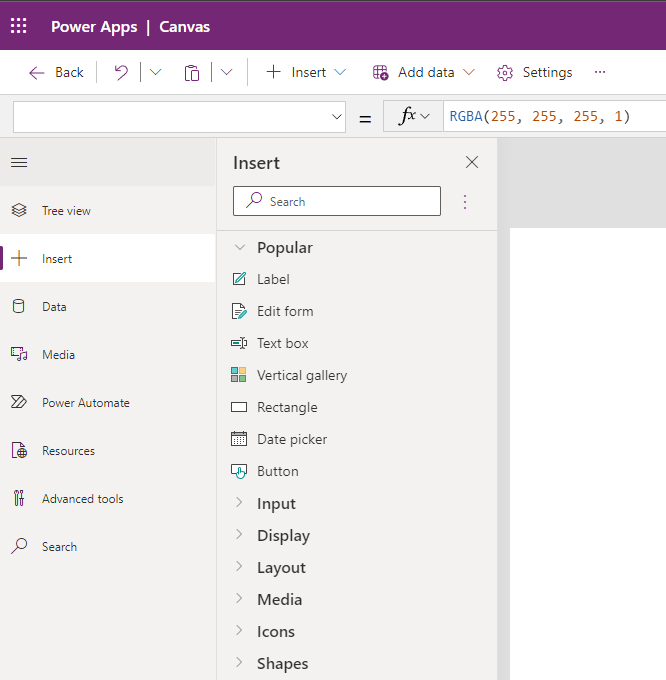

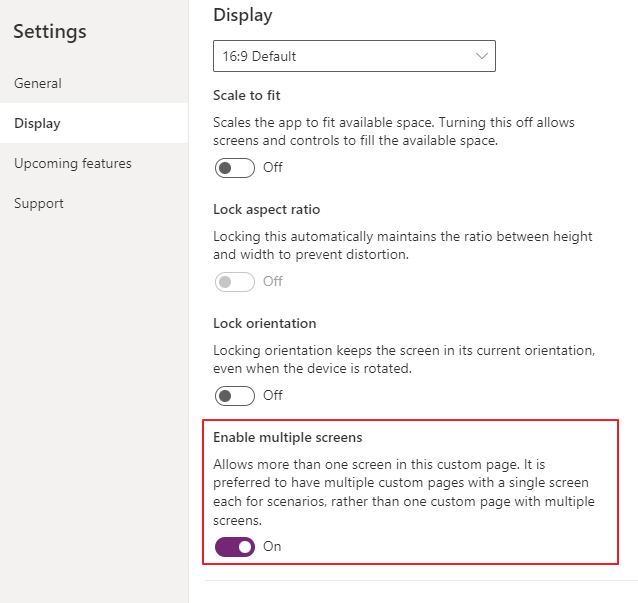

A custom page is a lot like a Canvas App, but it’s not exactly the same. Not all functionality is the same as a Canvas App, so you need to get familiar with it first.

The first thing I noticed was the lack of multiple screens.

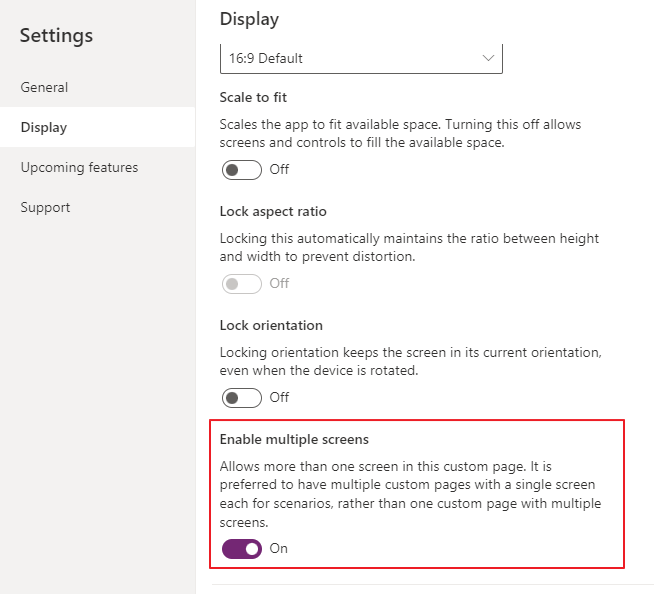

It seems it is not impossible to add more screens, but Microsoft has hidden this feature. The reason seems to be related to isolating a Screen as a specific application. That does make some sense, because a Custom Page can be opened from anywhere within Model Driven Apps and is not tied to any entity at all, but it doesn’t fit my use case. If you need to turn it on: 👇

Please don’t judge this Canvas App ATM. It will only get better over time 😂

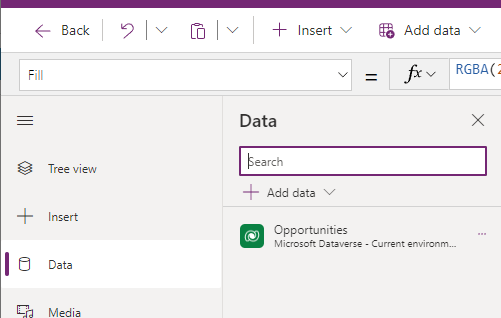

I added labels and text boxes without any logic to them yet and added a data source for Opportunities. This is all for the next step of our configuration of the Sales Win dialog.

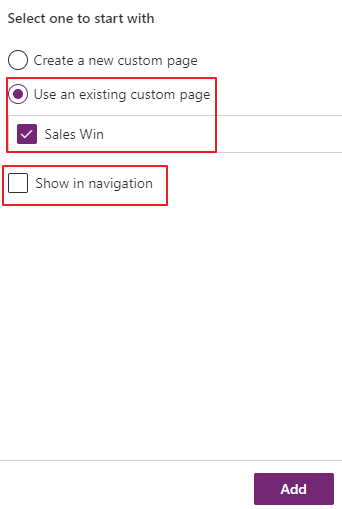

We now need to add the custom page to the APP.

Make sure you UNCHECK the “Show in navigation”. Otherwise, you will see this page as a navigation option on the left side like account, contact, oppty etc.

Finally, publish in the ribbon editor

Once the button is pushed, the Custom Page loads. 🙏👍🎉

Custom pages can only have one screen… Right? No, actually they can have multiple screens like a normal Canvas App

I was a bit surprised when learning this, because I had been told that there was only one screen per Custom Page. It turns out that Microsoft only recommends one screen per Custom Page, because they want to isolate the pages better and rather navigate between custom pages.

In some cases, you actually just want a simple screen instead of a new Custom Page.

This might be old news for many, but I for one did not notice this until recently 🤷‍♂️

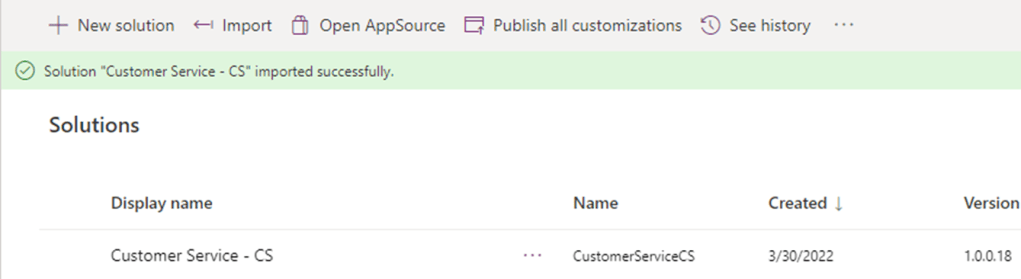

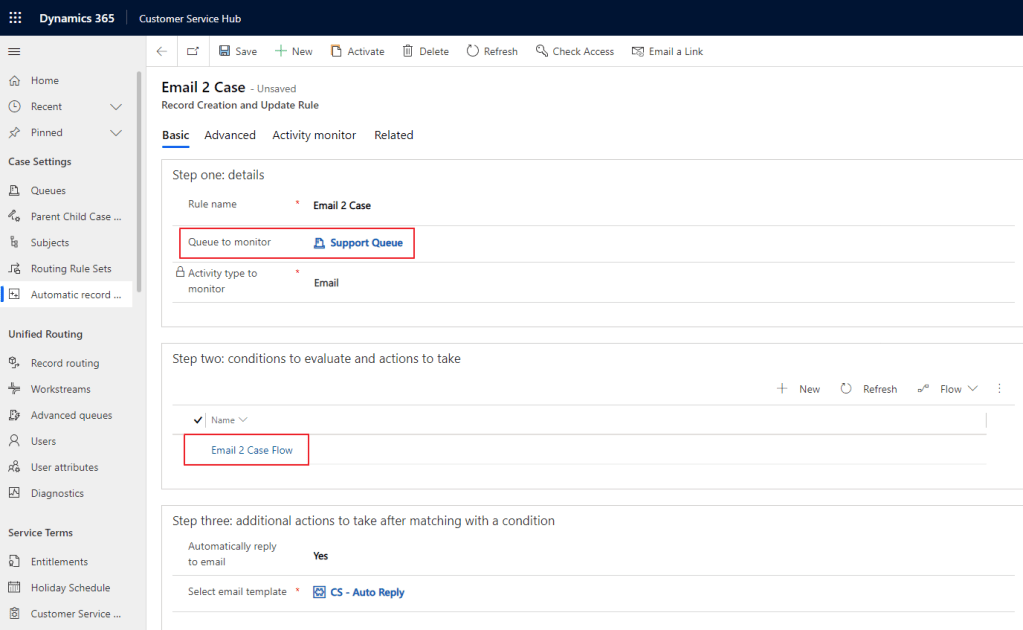

A while back I released a Customer Service solution to get a DEMO or simple production system up and running within an hour.

Due to recent updates to email to case and templates, the solution I had created failed every time on installation. After a few weeks with Microsoft Support we sorted it out, and the solution is back working again!😀😀🙏🎉🎉🥂🍾

Remember to get the latest version (18 or above) of the solution from the GitHub folder.

The main change in the setup is that email to case is now a part of Power Automate, and no longer a part of our good friend Workflow.

I wrote on my blog a while back about how to create Email -> Case the new way.

After importing the solution you will now find “Email 2 Case” in the Automatic Record Creation area. Open this via the Customer Service Hub.

Make sure you select the Queue that you added earlier (ADD QUEUE).

Open the Email 2 Case Flow to see the structure that Microsoft has now created for Email to Case.

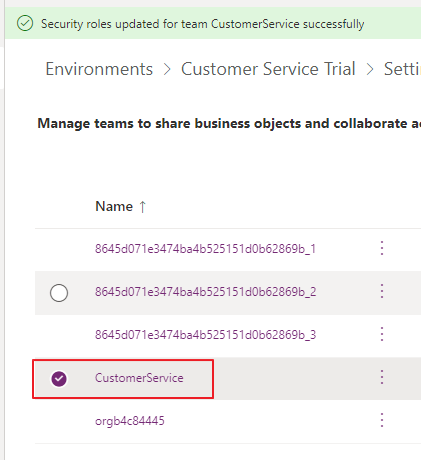

If you would like to add the Team as owner of the cases (optional), you have to add this line to the Owner field in the Flow.

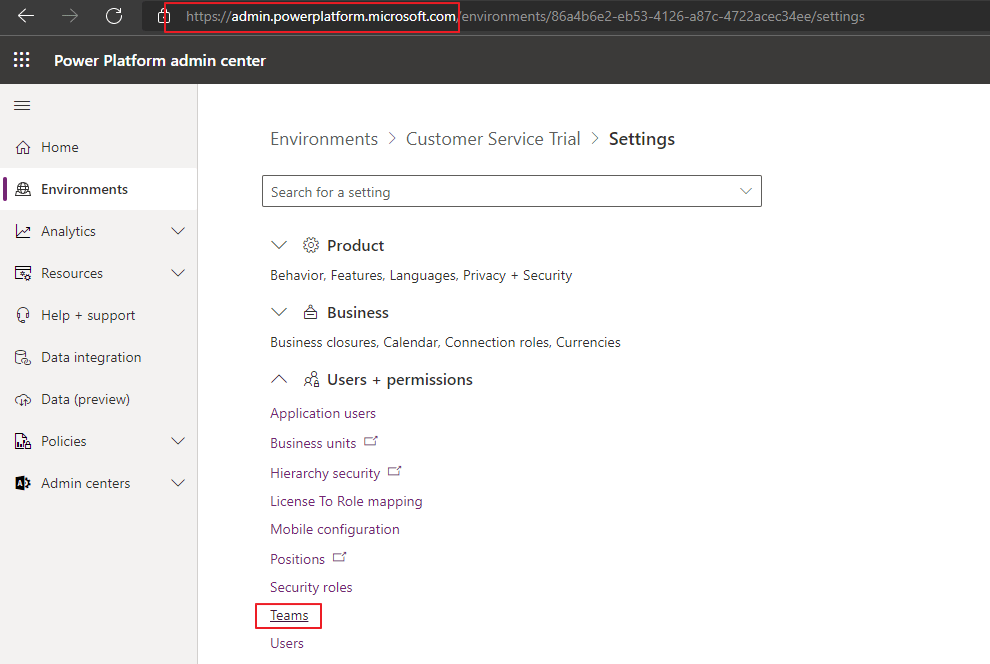

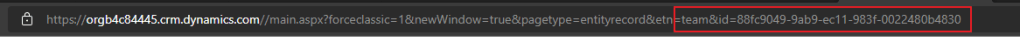

NB! You have to retrieve the GUID from the Team in CRM.

The last step is to activate the email to case record creation. At this point you should be able to see emails entering CRM as Cases.

During the recent Arctic Cloud Developer Challenge Hackathon I was playing around with AI Builder for the first time. The scenario we were going to build on there was the detection of Good guy / Bad guy.

The idea was that the citizens would be able to take pictures of suspicious behavior. Once the picture was taken, the classification of the picture would let them know if it was safe or not.

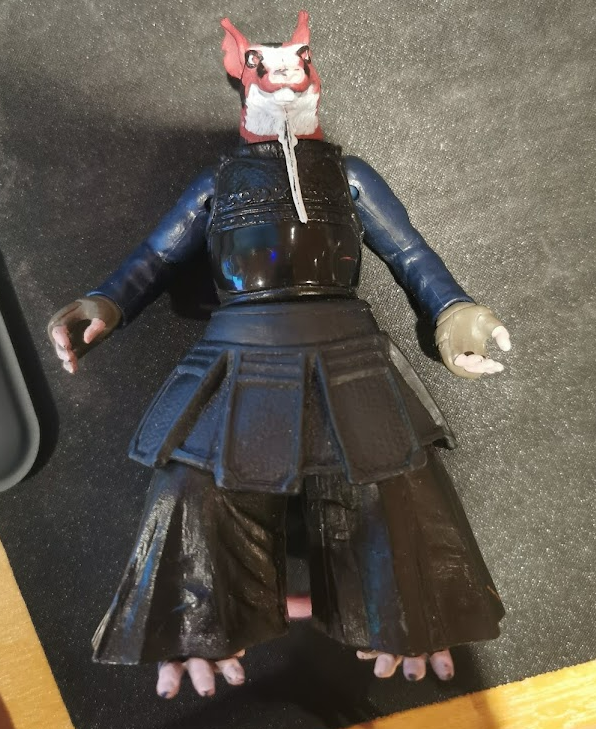

For this exercise I would use my trusted BadBoy/GoodBoy nephew toys:)

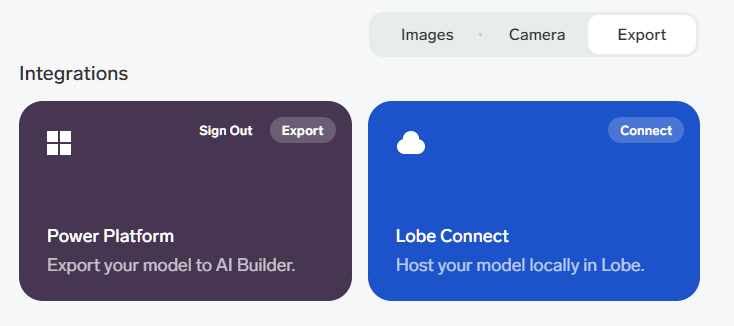

To start it off I downloaded a free tool called Lobe (www.lobe.ai). Microsoft acquired this tool recently, and it’s a great tool for learning more about object recognition in pictures. The really cool thing about the software is that the calculations for the AI model are done on your local computer, so you don’t need to set up any Azure services to try out a model for recognition.

Another great feature is that it integrates seamlessly with Power Platform. Once you train your model with the correct data, you just export it to Power Platform!👏

The first thing you need is enough pictures of the object. Take at least 15 pictures from different angles so the model can learn the object you want to detect.

Tag all of the images with the correct tags.

Next step is to train the model. This will be done using your local PC resources. When the training is complete you can export to Power Platform.

It’s actually that simple!!! This was really surprising to me:)

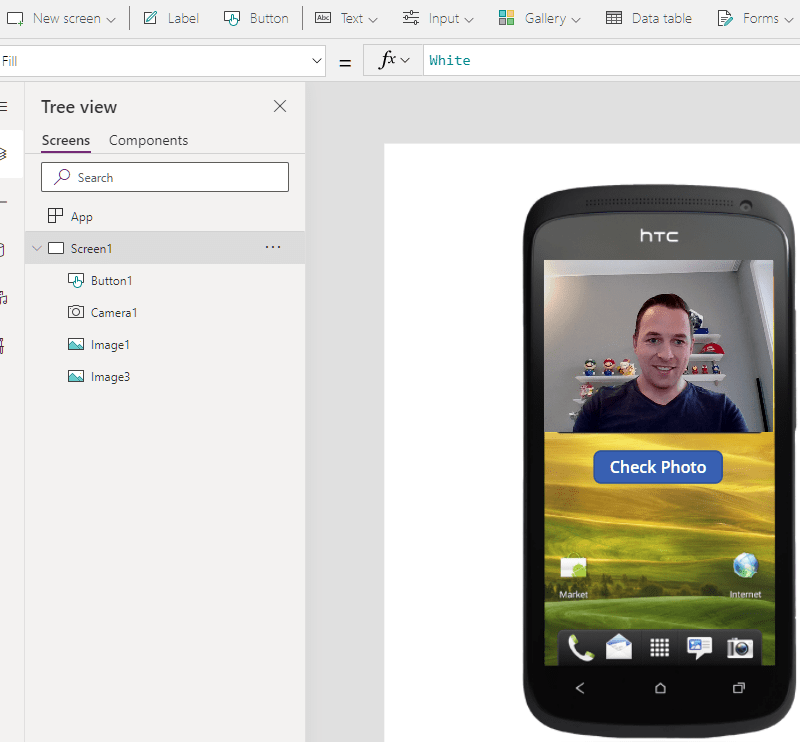

Next up was the Power App the citizens were going to use for the pictures. The idea, of course, was that everyone had this app on their phones and licensing wasn’t an issue 😂

I just added a camera control, and used a button to call a Power Automate Cloud Flow, but this is also where the tricky parts began.

An image is not just an image!!!!! 😤🤦‍♂️🤦‍♀️

How on earth is anyone supposed to understand that you need to convert a picture you take so that you can send it to Flow, only to convert it there to something else that then makes sense?!

After asking a few friends and googling tons of different tips/tricks, I was able to make the lines below work. I am sure there are other ways of doing this, but none of them are obvious to me.

Set(WebcamPhoto, Camera1.Photo);

Set(PictureFormat,Substitute(Substitute(JSON(WebcamPhoto,JSONFormat.IncludeBinaryData),"data:image/png;base64,",""),"""",""));

'PowerAppV2->Compose'.Run(PictureFormat);

The receiving Power Automate Cloud Flow looked like this:

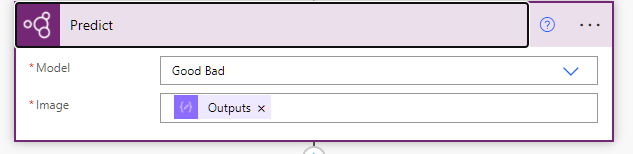

I tried receiving the image as an image type, but I couldn’t figure it out. Therefore I converted it to Base64 (I believe) when sending it to Flow. In the Flow I again converted this to a binary representation of the image before sending it to the prediction.
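The two conversions are easier to follow side by side. This JavaScript (Node.js) sketch mirrors them under a few assumptions: the app-side JSON output is a quoted data URI, the helper names `toBase64Payload` and `toBinary` are hypothetical, and the sample string is just the 8-byte PNG file signature, not a real photo.

```javascript
// Step 1 (what the Substitute calls do in the app): drop the surrounding
// quotes and the "data:image/...;base64," prefix, leaving pure Base64 text.
function toBase64Payload(jsonEncodedImage) {
  return jsonEncodedImage
    .replace(/"/g, "")
    .replace(/^data:image\/[a-z0-9.+-]+;base64,/i, "");
}

// Step 2 (what the flow does before the prediction): decode Base64 to bytes.
function toBinary(base64Payload) {
  return Buffer.from(base64Payload, "base64");
}

// Sample: the PNG file signature encoded as a quoted data URI.
const sampleImage = '"data:image/png;base64,iVBORw0KGgo="';

const payload = toBase64Payload(sampleImage);
const bytes = toBinary(payload);

console.log(payload);      // iVBORw0KGgo=
console.log(bytes.length); // 8
```

The pattern in the sketch accepts any image type in the prefix, whereas the formula in the app only strips the png prefix, so a camera that returns another format would need the matching prefix there.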

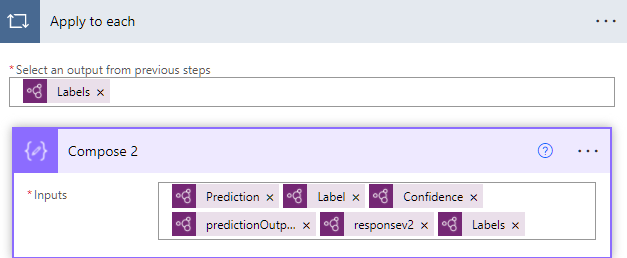

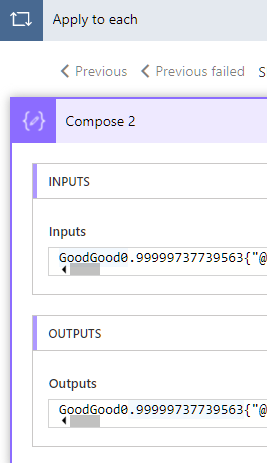

The prediction on the other hand worked out really nicely!! I found the model that I had imported from Lobe, and used the outputs from the Compose 3 action (base64 to binary). I have no idea what the differences are, but I just acknowledge that this is how it has to be done. I am sure there are other ways, but someone will have to teach me those in the future.

All in all it actually worked really well. As you can see here I added all types of outputs to learn from the data, but it was exactly as expected when taking a picture of Winnie the Pooh 😊 The bear was categorized as good, and my model was working.

One can wonder why I chose to use Lobe for this, when the AI Builder has the training functionality included within the Power Platform. For my simple case it wouldn’t really matter what I chose to use, I just wanted to test out the newest tech.

When working with a larger number of images, Lobe seems to be a lot easier for importing, tagging and training the model. Everything runs locally, so training and testing are also a lot faster. It’s also simple to retrain the model and upload it again. This being a hackathon, it was important to try new things out 😊

I talked to Joe Fernandez from the AI Builder team, and he pointed me to some resources that are nice to check out regarding this topic.

https://myignite.microsoft.com/sessions/a5da5404-6a25-4428-b4d0-9aba67076a08 <- forward to 11:50 for info regarding the AI Builder